AI-Driven Network Pharmacology: Revolutionizing Natural Product Discovery through Multi-Scale Systems Analysis

Abstract

This article synthesizes the transformative convergence of artificial intelligence (AI) and network pharmacology (NP) in natural product research, a field critical for researchers and drug development professionals. It explores the foundational shift from a reductionist 'one-drug-one-target' model to a holistic 'network-target-multi-component' paradigm, which aligns perfectly with the polypharmacology of plant-based medicines. The core of the discussion details the methodological workflow—from multi-source data integration using AI to predictive target identification and virtual screening—showcasing concrete applications in areas like oncology and depression. The article critically addresses persistent challenges, including data quality, reproducibility, and model interpretability, offering insights into optimization strategies and validation frameworks. Finally, it evaluates the comparative advantages of AI-enhanced NP over traditional methods and outlines a forward-looking roadmap for clinical translation and sustainable drug discovery, aiming to bridge empirical traditional knowledge with mechanism-driven precision medicine.

From Single Targets to System Networks: The Foundational Shift Powering Natural Product Research

The Limitation of the 'One-Drug-One-Target' Paradigm for Complex Natural Products

Abstract

The historical ‘one-drug-one-target’ paradigm, while successful for monogenic diseases, demonstrates fundamental limitations in addressing complex, multifactorial diseases such as cancer, neurodegenerative disorders, and metabolic syndromes. This reductionist approach often fails due to network resilience, compensatory biological pathways, and the onset of drug resistance [1]. In contrast, complex natural products, with their inherent structural diversity and polypharmacology, are ideally suited for multi-target engagement. This whitepaper details the scientific limitations of the single-target model, articulates the theoretical and practical advantages of a network pharmacology framework, and provides a technical guide for integrating artificial intelligence (AI) and advanced experimental methodologies to elucidate and harness the multi-target mechanisms of natural products for next-generation drug discovery [2] [3].

The Scientific and Clinical Limitations of Single-Target Pharmacology

The traditional drug discovery pipeline has been predominantly guided by the ‘one-drug-one-target’ dogma, aiming for high-affinity, high-selectivity ligands [1]. This paradigm is pharmacologically rooted in the lock-and-key model, where a drug (key) is designed to fit a specific protein target (lock) [4]. While effective for diseases driven by a single gene or protein defect, this model exhibits critical failures when applied to complex pathophysiological states.

1.1 Network Resilience and Compensatory Mechanisms

Biological systems are highly interconnected and robust networks, not simple linear pathways. Diseases like Alzheimer's, Parkinson's, and major cancers arise from the dysregulation of complex molecular networks involving genetic, proteomic, and metabolic interactions [5] [6]. Targeting a single node within such a resilient network often triggers adaptive bypass mechanisms or activation of alternative pathways, leading to insufficient therapeutic efficacy [1] [4]. This systems-level resilience explains the high attrition rate of single-target drugs in late-stage clinical trials for complex diseases.

1.2 Inevitability of Drug Resistance

Drug resistance, a major challenge in oncology and antimicrobial therapy, is accelerated by the single-target approach. A selective therapeutic pressure on one target enables rapid selection for pre-existing or de novo mutations in the target protein, rendering the drug ineffective. Simultaneously targeting multiple nodes in a disease network presents a higher barrier to resistance, as a pathogen or cancer cell must concurrently evolve mutations across multiple essential targets to survive [4].

1.3 The Off-Target Toxicity Paradox

Counterintuitively, the pursuit of exclusive selectivity can exacerbate safety issues. When a single target is ubiquitously expressed or shares critical functions in healthy tissues, its inhibition can lead to mechanism-based toxicities. Conversely, a natural product engaging several targets with moderate affinity may distribute its pharmacological effect across a network, potentially achieving a desired therapeutic outcome with a more tolerable side-effect profile through a “network buffering” effect [2].

Table 1: Quantitative Limitations of the Single-Target Paradigm in Complex Diseases

| Disease Category | Example Diseases | Key Limitation of Single-Target Approach | Clinical Consequence |
|---|---|---|---|
| Neurodegenerative | Alzheimer's, Parkinson's, ALS | Multiple parallel pathogenic pathways (e.g., protein aggregation, inflammation, oxidative stress) [6]. | Dozens of late-stage trial failures; symptomatic treatments only. |
| Oncological | Solid tumors, Hematologic cancers | Tumor heterogeneity, adaptive signaling, and immune evasion [1]. | High frequency of acquired resistance to kinase inhibitors and monoclonal antibodies. |
| Metabolic | Type 2 Diabetes, NAFLD | Systemic dysregulation of hormonal, metabolic, and inflammatory networks [1]. | Inability to halt disease progression with single-hormone therapies. |
| Infectious Disease | Malaria, Tuberculosis, HIV | High mutation rate of pathogens [4]. | Rapid emergence of multi-drug resistant (MDR) strains. |

Natural Products as Inherent Multi-Target Therapeutics

Natural products (NPs) are evolutionary-optimized chemical entities that interact with biological systems. Over half of all approved small-molecule drugs are derived from or inspired by natural products [1]. Their utility stems from intrinsic properties that align with network pharmacology principles.

2.1 Chemical Diversity and Polypharmacology

NPs possess unparalleled scaffold diversity and structural complexity, often containing multiple chiral centers and functional groups. This enables them to interact with multiple biological targets, a property termed polypharmacology [2] [7]. A classic example is the polyphenol resveratrol, which is reported to modulate sirtuins, NF-κB, cyclooxygenases, and antioxidant response elements [1].

2.2 Synergistic Actions in Complex Mixtures

Traditional herbal medicines, such as Traditional Chinese Medicine (TCM) formulas, are prototypical multi-component, multi-target therapies. Formulas like Sini Decoction (for heart failure) contain multiple active ingredients (e.g., alkaloids, flavones) that collectively modulate a network of targets related to inflammation, apoptosis, and oxidative stress, demonstrating effects greater than the sum of their parts [2] [8]. This synergistic complexity is poorly captured by isolating single constituents.

2.3 The "Functional Structure" and Conformational Flexibility

A key mechanistic insight is the concept of a "functional structure": the three-dimensional conformation a natural product adopts when bound to a specific biomolecular target or membrane environment [7]. Flexible NP scaffolds can adopt distinct conformations to engage different targets, acting as a "skeleton key" [4]. Techniques like solid-state NMR and computational modeling are essential to elucidate these dynamic, environment-dependent conformations, moving beyond static structural depictions [7].

A Network Pharmacology & AI Framework for NP Research

Network pharmacology provides the conceptual and computational framework to transition from "one-target" to "network-target" therapeutics [2]. Artificial Intelligence accelerates every step of this pipeline, from prediction to validation [3] [9].

3.1 The Core Workflow: From NP to Network

The systematic investigation of a multi-target NP involves a cyclical, integrative workflow.

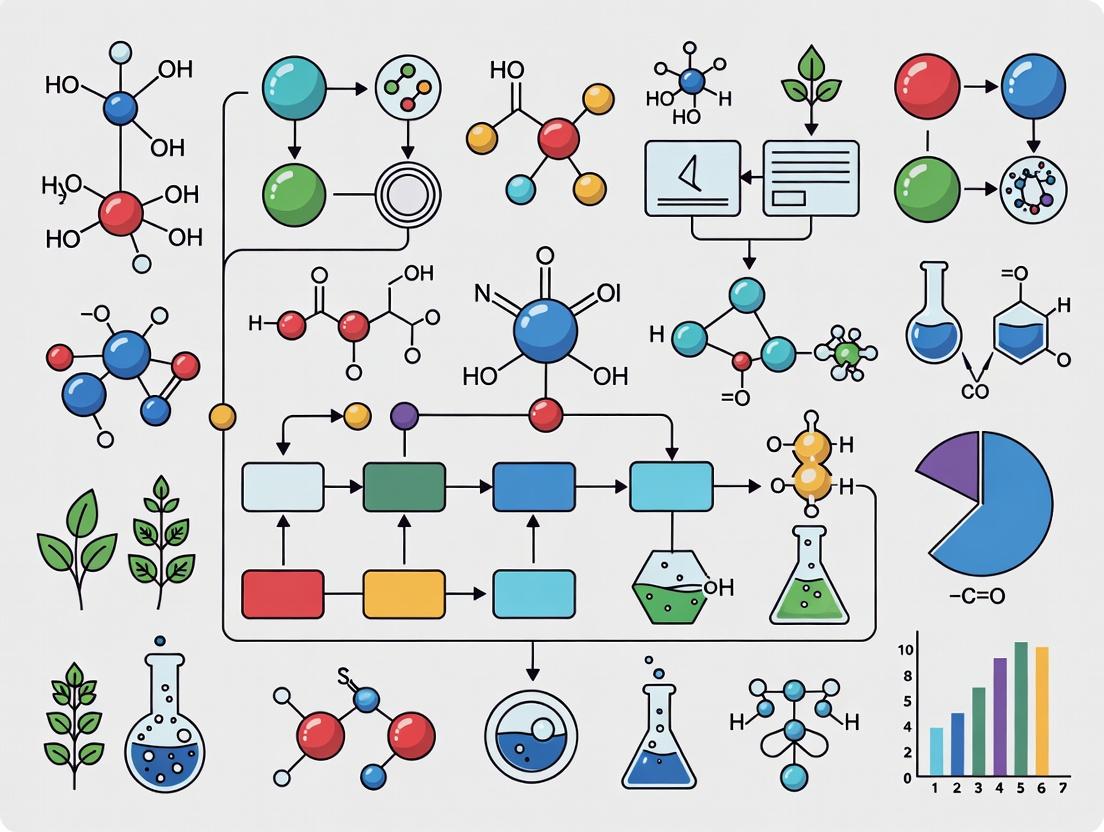

Diagram 1: Integrative Network Pharmacology & AI Workflow for NP Research.

3.2 Critical AI and Computational Methodologies

- Target Identification & Network Construction: AI models, including graph neural networks and large language models, mine literature and databases to predict NP-target interactions [3]. Tools like the RosettaVS platform enable ultra-large-scale virtual screening of billions of compounds against single or multiple protein structures, accounting for critical receptor flexibility [9]. Network analysis software (e.g., Cytoscape) integrates these predictions to map compound-target-pathway-disease networks [8].

- Synergy Prediction & Mechanism Inference: Machine learning algorithms analyze high-throughput screening data to predict synergistic or antagonistic interactions between multiple NP components. Network proximity analysis compares the network location of drug targets versus disease-associated genes to infer therapeutic potential and mechanistic insights [5] [3].

- Integrative Multi-Omics Gating: AI acts as an "operational gate" by integrating transcriptomic, proteomic, and metabolomic signatures. A promising NP candidate should reverse a disease-associated gene signature (e.g., from patient-derived cells), show engagement with predicted protein targets in proteomic assays, and induce a corresponding shift in the metabolomic profile [3].
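
The network proximity idea in the list above can be made concrete with a small sketch. It computes one commonly used proximity measure, the average shortest-path distance from each drug target to its nearest disease gene, on a toy interactome. The graph, node names, and function names are hypothetical illustrations, not taken from the cited work, and published analyses typically also compare the observed value against randomly sampled node sets, a step omitted here.

```python
from collections import deque


def shortest_path_lengths(graph, source):
    """Breadth-first search: hop distance from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def closest_distance(graph, drug_targets, disease_genes):
    """Mean, over drug targets, of the distance to the nearest disease gene."""
    total, counted = 0, 0
    for target in drug_targets:
        dist = shortest_path_lengths(graph, target)
        reachable = [dist[g] for g in disease_genes if g in dist]
        if reachable:  # skip targets disconnected from every disease gene
            total += min(reachable)
            counted += 1
    return total / counted if counted else float("inf")


# Hypothetical toy interactome (undirected), stored as adjacency sets.
edges = [("A", "B"), ("B", "C"), ("C", "D"), ("D", "E"), ("B", "F")]
graph = {}
for u, v in edges:
    graph.setdefault(u, set()).add(v)
    graph.setdefault(v, set()).add(u)

proximity = closest_distance(graph, {"A", "F"}, {"C", "E"})
print(proximity)
```

A smaller value means the compound's targets sit closer to the disease module. Whether a given value is meaningful depends on the comparison against a random baseline.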

3.3 Experimental Validation in Physiologically Relevant Models

Predictions must be anchored in rigorous experiment. The choice of model system is paramount.

- Phenotypic Screening (PDD): There is a renaissance in phenotypic drug discovery for complex diseases. High-content screening (HCS) in human induced pluripotent stem cell (iPSC)-derived neurons or cardiomyocytes can capture complex disease phenotypes (e.g., protein aggregation, neurite outgrowth, rhythmic beating) and identify multi-target modulators without a priori target bias [6].

- Target Deconvolution: Following a phenotypic hit, target identification is performed. Techniques include cellular thermal shift assay (CETSA), drug affinity responsive target stability (DARTS), and phosphoproteomics to identify engaged proteins and downstream signaling effects [7].

- Functional Structure Elucidation: As noted, understanding the functional structure of NPs is critical. Solid-state NMR can determine the conformation of flexible NPs within lipid bilayers, while X-ray crystallography and cryo-EM provide atomic details of NP-protein complexes [7].

Table 2: The Scientist's Toolkit: Key Reagents & Technologies for NP Multi-Target Research

| Tool Category | Specific Technology/Reagent | Primary Function in NP Research |
|---|---|---|
| AI & Informatics | Graph Neural Networks, RosettaVS, LLMs (e.g., for TCM formula standardization) [3] [9] | Predict NP-target interactions, screen ultra-large libraries, analyze complex herb-ingredient networks. |
| Omics Technologies | RNA-seq, LC-MS/MS Proteomics, Untargeted Metabolomics with Molecular Networking [2] [3] | Provide global, unbiased data on NP-induced changes at mRNA, protein, and metabolite levels. |
| Advanced Model Systems | Disease-specific human iPSCs, 3D organoids, Microphysiological systems (Organ-on-a-chip) [6] | Provide human-relevant, phenotypic contexts for screening and validation that capture cellular interactions. |
| Target Engagement | Cellular Thermal Shift Assay (CETSA), Activity-Based Protein Profiling (ABPP) [7] | Directly confirm physical interaction between an NP and its putative protein targets in a native cellular environment. |
| Structural Biology | Cryo-Electron Microscopy, Solid-State NMR (for membrane-bound NPs) [7] | Elucidate the atomic-level "functional structure" of NPs bound to their macromolecular targets or within membranes. |
| High-Content Screening | Automated fluorescence microscopy (e.g., for neuronal morphology, protein aggregation) [6] | Enable multiparametric phenotypic analysis of NP effects in complex disease models. |

Detailed Protocol: Network Analysis for Target Identification of a Herbal Formula

This protocol, adapted from research on Sini Decoction (SND), outlines a stepwise approach to identify multi-target mechanisms [8].

Objective: To identify the key protein targets of active components in a multi-herb formulation contributing to its therapeutic effect against a complex disease (e.g., heart failure).

4.1 Stage 1: Identification of Bioavailable Active Components

- Method: Use serum pharmacochemistry. Administer the herbal formula to animal models, collect serum at various time points, and use UPLC-Q-TOF/MS to identify compounds that have been absorbed into the bloodstream (prototypes) and their metabolites.

- Data Integration: Cross-reference detected compounds with chemical databases via text mining. Use similarity matching (e.g., Tanimoto coefficient >0.8) to find structurally analogous known drugs.

- Output: A curated list of "potentially active components" (PACs) that are systemically available.
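
The similarity-matching step in Stage 1 can be sketched as follows. The Tanimoto coefficient between two fingerprints is the number of shared on-bits divided by the number of bits set in either. The fingerprints, compound names, and helper functions below are hypothetical; in practice the bit sets would come from a cheminformatics toolkit rather than being written by hand.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def find_analogs(query_fp, reference_fps, threshold=0.8):
    """Return (name, similarity) pairs above the threshold, most similar first."""
    scored = [(name, tanimoto(query_fp, fp)) for name, fp in reference_fps.items()]
    hits = [(name, score) for name, score in scored if score > threshold]
    return sorted(hits, key=lambda pair: -pair[1])


# Hypothetical fingerprints: each integer is the index of a bit that is set.
serum_compound = {1, 4, 7, 9, 12, 15, 18, 21, 30}
known_drugs = {
    "drug_A": {1, 4, 7, 9, 12, 15, 18, 21, 30, 33},  # 9 shared bits, 10 in union
    "drug_B": {1, 4, 7, 40, 41, 42},                 # 3 shared bits, 12 in union
}

analogs = find_analogs(serum_compound, known_drugs)
print(analogs)
```

Only `drug_A` clears the >0.8 cutoff in this toy data, so only its known targets would be carried forward as analog-based hypotheses.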

4.2 Stage 2: Network Pharmacology-Based Target Prediction

- Target Fishing: For each PAC, use multiple prediction methods:

  - Text Mining: Query PubMed, ChEMBL, and BindingDB for known targets of the PAC or its analogs.

  - Molecular Docking: Perform in silico docking of PACs against a human protein structure library (e.g., PDB) using a platform like RosettaVS [9]. Prioritize targets with strong predicted binding affinity and a plausible role in the disease.

- Network Construction & Enrichment:

  - Build a Component-Target (C-T) network (e.g., in Cytoscape) with PACs and predicted targets as nodes.

  - Input the target protein list into the STRING database to generate a Protein-Protein Interaction (PPI) network and perform GO/KEGG pathway enrichment analysis.

  - Construct a Target-Pathway-Disease meta-network to visualize the therapeutic hypothesis.
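
As a rough illustration of the Component-Target network step, the sketch below turns per-compound target predictions into an edge list and ranks targets by how many PACs point at them. The compound labels and predictions are hypothetical placeholders, and the gene symbols are used only as example node names.

```python
from collections import defaultdict

# Hypothetical target predictions: PAC -> set of predicted protein targets.
predicted = {
    "PAC_1": {"TNF", "CASP3", "AKT1"},
    "PAC_2": {"TNF", "IL6"},
    "PAC_3": {"TNF", "CASP3"},
}


def build_ct_network(predictions):
    """Flatten the predictions into a sorted list of (component, target) edges."""
    return [
        (pac, target)
        for pac, targets in sorted(predictions.items())
        for target in sorted(targets)
    ]


def rank_targets(edges):
    """Count how many components hit each target; highest count first."""
    degree = defaultdict(int)
    for _, target in edges:
        degree[target] += 1
    return sorted(degree.items(), key=lambda item: (-item[1], item[0]))


ct_edges = build_ct_network(predicted)
ranking = rank_targets(ct_edges)
print(ranking)
```

An edge list like `ct_edges` can be written to a delimited text file for import into a network tool. Targets hit by several PACs are natural first candidates for experimental follow-up.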

4.3 Stage 3: Experimental Validation of Critical Network Nodes

- Hypothesis Selection: From the meta-network, select a central target node that is implicated by multiple PACs and sits at the intersection of several disease-relevant pathways (e.g., TNF-α in inflammation and apoptosis for heart failure) [8].

- Direct Binding Validation:

  - Surface Plasmon Resonance (SPR) or Microscale Thermophoresis (MST): Purify the recombinant target protein (e.g., TNF-α) and test direct binding with isolated PACs to determine binding affinity (KD).

- Functional Cellular Validation:

  - Use a disease-relevant cell-based assay. For a TNF-α target, employ a TNF-α-induced cytotoxicity assay in L929 cells.

  - Pre-treat cells with individual PACs or the full formula extract, then apply a cytotoxic dose of TNF-α.

  - Measure cell viability (MTT assay). A significant protective effect confirms the functional relevance of the predicted target engagement.

  - Downstream, assess modulation of predicted pathway markers (e.g., caspase-3 activity for apoptosis) via western blot or ELISA.
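
The viability readout in the cellular assay reduces to simple arithmetic on absorbance values. The sketch below uses the conventional blank-corrected ratio to an untreated control. All absorbance numbers are invented for illustration, and the function names are hypothetical.

```python
def percent_viability(a_sample, a_control, a_blank=0.0):
    """Blank-corrected absorbance of a well, as a percentage of the untreated control."""
    denominator = a_control - a_blank
    if denominator <= 0:
        raise ValueError("control absorbance must exceed the blank")
    return 100.0 * (a_sample - a_blank) / denominator


def protection(viability_tnf_only, viability_pretreated):
    """Percentage points of viability recovered by pre-treatment."""
    return viability_pretreated - viability_tnf_only


# Invented absorbance readings.
blank, untreated = 0.05, 1.05
tnf_only = percent_viability(0.45, untreated, blank)        # TNF-α alone
pac_plus_tnf = percent_viability(0.85, untreated, blank)    # PAC pre-treatment, then TNF-α
rescued = protection(tnf_only, pac_plus_tnf)
print(round(tnf_only, 1), round(pac_plus_tnf, 1), round(rescued, 1))
```

Whether the recovered viability counts as a significant protective effect still has to be judged statistically across replicate wells, which this sketch does not cover.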

Diagram 2: Multi-Target Network Modulation Leading to Phenotypic Correction.

Challenges and Future Perspectives

Despite its promise, the network pharmacology approach to NPs faces significant hurdles.

- Data Quality and Standardization: NP research suffers from batch variability, incomplete chemical characterization, and poorly annotated bioactivity data [2] [3]. Future efforts require "minimal information" standards for NP metadata and robust quality control.

- Computational and Conceptual Barriers: Designing drugs with "selective non-selectivity" remains a medicinal chemistry challenge [4]. AI models are limited by small, imbalanced datasets and can lack interpretability ("black box" problem) [3]. Techniques like uncertainty quantification and applicability domain assessment are needed to gate predictions.

- Validation Complexity: Deconvoluting the precise contribution of each NP component and each target interaction within a network is immensely complex. Mechanistic add-back experiments (testing combinations of isolated targets' modulators) and microphysiological systems with digital twins are promising future directions for causal validation [3] [6].

The future of natural product drug discovery lies in embracing their inherent complexity rather than forcing reductionism. By integrating network pharmacology, AI, and human-relevant experimental models, researchers can systematically decode and rationally develop these evolutionary-endowed multi-target therapies, ultimately moving beyond the limitations of the 'one-drug-one-target' paradigm to treat complex diseases.

Traditional Chinese Medicine (TCM) operates on a foundational philosophy of holism and systemic regulation, viewing the human body as an interconnected system where balance is paramount [10]. Its therapeutic approach is characterized by a "multi-component, multi-target, multi-pathway" (MCMTMP) mode of action, where combinations of natural products exert synergistic effects by modulating complex biological networks [10]. This stands in direct contrast to the conventional "single drug, single target" paradigm of Western drug discovery, which often fails to capture the therapeutic essence of TCM formulations [11].

Network pharmacology (NP) has emerged as the ideal methodological framework to decode this complexity. By constructing and analyzing "herb–component–target–disease" networks, NP aligns perfectly with TCM's holistic principles [12]. It provides a systems-level perspective that can elucidate how multiple active ingredients collectively influence an array of biological targets and pathways to restore physiological balance [13]. The convergence of NP with artificial intelligence (AI) and multi-omics technologies is now driving a transformative shift, enabling the predictive, efficient, and mechanistic validation of TCM's empirical wisdom [11]. This synergy represents a critical pathway for the modernization and global acceptance of traditional medicine, bridging ancient therapeutic concepts with cutting-edge computational and biological science [10].

Core Concepts: The Network Pharmacology Framework

At its core, network pharmacology treats biological systems as intricate networks. It maps the relationships between drugs (or herbal compounds), their protein targets, associated diseases, and biological pathways [13]. The fundamental unit of analysis is the "network target"—a subnetwork of biomolecules and interactions that is dysregulated in a disease state and can be modulated by a therapeutic agent [11]. This shifts the drug discovery focus from searching for a single "magic bullet" target to identifying key regulatory nodes within disease networks [10].

The methodology follows a structured pipeline [13]:

- Identification of active compounds from herbal formulas using pharmacokinetic filters like oral bioavailability (OB) and drug-likeness (DL).

- Prediction and collection of compound targets and disease-related targets from specialized databases.

- Construction of interaction networks, including compound-target and protein-protein interaction (PPI) networks, to identify hub targets.

- Enrichment analysis of hub targets to elucidate involved biological pathways and functions.

- Experimental validation through molecular docking, in vitro, and in vivo studies.

This framework transforms a complex TCM formula into a testable network model, allowing researchers to generate specific hypotheses about its synergistic mechanisms [12].

The AI Revolution in Network Pharmacology

Traditional NP approaches face challenges with data noise, high dimensionality, and static analysis [10]. The integration of Artificial Intelligence (AI) is overcoming these limitations, creating a more powerful AI-driven network pharmacology (AI-NP) paradigm [10]. The following table summarizes the key comparative advantages.

Table 1: Comparative Analysis of Traditional vs. AI-Driven Network Pharmacology [10]

| Comparison Dimension | Traditional Network Pharmacology | AI-Driven Network Pharmacology (AI-NP) | Remarks and Insights |
|---|---|---|---|
| Data Acquisition | Relies on public databases (TCMSP, GeneCards); data is fragmented and updated slowly. | Integrates multimodal data (omics, EHR, text mining) for dynamic, high-dimensional fusion. | AI improves data integration depth and timeliness. |
| Algorithmic Characteristics | Based on statistics, correlation networks, and topology analysis. | Utilizes ML, DL, and Graph Neural Networks (GNN) to identify complex, non-linear patterns. | Shifts from experience-driven to data-driven discovery. |
| Model Interpretability | Good interpretability but limited handling of high-dimensional data. | Complex models can be opaque, but Explainable AI (XAI) tools (e.g., SHAP) enhance transparency. | Future models must balance predictive power with interpretability. |
| Computational Efficiency | Manual or semi-automated processing; lower efficiency. | High-throughput parallel computing; scalable to large, dynamic networks. | AI enables analysis of increasingly complex pharmacological systems. |
| Clinical Translation | Focuses on mechanistic, preclinical studies. | Integrates clinical big data for precision prediction and patient stratification. | AI-NP better bridges experimental research and clinical application. |

- Machine Learning (ML) & Deep Learning (DL): These techniques excel at predicting drug-target interactions (DTIs) from chemical and genomic data. Models can screen millions of compounds, vastly accelerating the identification of active components from TCM libraries [10]. DL is also used for de novo molecular design, optimizing lead compounds for better efficacy and safety [12].

- Graph Neural Networks (GNNs): GNNs are uniquely suited for NP because they operate directly on graph-structured data. They can learn powerful representations from the "compound-target-disease" network itself, predicting novel therapeutic associations, identifying critical network modules, and uncovering latent synergy mechanisms [10] [11].

- Multi-modal Data Integration: AI-NP leverages not just curated databases but also raw, high-throughput data. It integrates transcriptomics, proteomics, metabolomics, and clinical phenomics to construct multi-scale, dynamic network models that reflect the true biological state before and after treatment [11] [12].
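
To show why operating directly on the graph matters, here is a minimal sketch of the neighborhood aggregation that underlies GNNs. Each node's vector is replaced by the mean of its own and its neighbors' vectors. This is a plain-Python simplification under stated assumptions: real GNN layers add learned weights and nonlinearities, and the three-node graph and feature vectors are hypothetical.

```python
def mean_aggregate(features, adjacency):
    """One message-passing round: average each node's vector with its neighbors'."""
    updated = {}
    for node, vector in features.items():
        group = [vector] + [features[n] for n in adjacency.get(node, ())]
        updated[node] = [sum(values) / len(group) for values in zip(*group)]
    return updated


# Hypothetical compound -> target -> disease chain with one-hot starting features.
adjacency = {"C1": ["T1"], "T1": ["C1", "D1"], "D1": ["T1"]}
h0 = {"C1": [1.0, 0.0, 0.0], "T1": [0.0, 1.0, 0.0], "D1": [0.0, 0.0, 1.0]}

h1 = mean_aggregate(h0, adjacency)  # compound sees its target
h2 = mean_aggregate(h1, adjacency)  # compound now also carries disease signal
print(h1["C1"], h2["C1"])
```

After two rounds the compound's vector has a non-zero disease component even though no direct compound-disease edge exists. This is the intuition behind using learned node representations to propose new compound-disease links.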

Diagram 1: AI-Enhanced Network Pharmacology Data Integration Workflow

Methodological Guide: From Network Construction to Experimental Validation

A rigorous, multi-step workflow is essential for credible NP research. Below is a detailed protocol integrating AI-enhanced steps.

Network Construction & Analysis Protocol

Phase 1: Data Curation & Active Compound Screening

- Source Herbal Components: For a TCM formula (e.g., Shengmai San), extract all chemical constituents from databases like TCMSP (https://tcmsp-e.com) or ETCM 2.0 (http://www.tcmip.cn/ETCM) [12] [13].

- PK/PD Screening: Apply Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) filters. Standard criteria include Oral Bioavailability (OB) ≥ 30% and Drug-likeness (DL) ≥ 0.18 to prioritize compounds with feasible pharmacokinetic profiles [13].

- Target Identification:

  - For known targets: Retrieve from TCMSP, HIT, or DrugBank [13].

  - For novel prediction: Use AI-powered tools. Input compound SMILES or structures into:

- Disease Target Retrieval: Obtain genes associated with the disease (e.g., myocardial infarction) from DisGeNET, GeneCards, or OMIM [13].

- Intersection & PPI Network: Take the intersection of compound-predicted and disease-related targets. Input these "potential therapeutic targets" into STRING or similar to build a Protein-Protein Interaction (PPI) network. Analyze topology (degree, betweenness centrality) using Cytoscape (v3.10.2) to identify hub genes [12] [13].
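
The Phase 1 steps above can be sketched end to end on toy data: apply the OB and DL cutoffs, intersect compound targets with disease genes, and rank the surviving targets by degree in a PPI edge list. Every compound record, gene set, and edge below is a hypothetical placeholder for data that would come from the databases named in the protocol.

```python
# Hypothetical compound records (OB in percent, DL unitless) with predicted targets.
compounds = [
    {"name": "cmpd_1", "ob": 45.2, "dl": 0.25, "targets": {"AKT1", "TNF", "IL6"}},
    {"name": "cmpd_2", "ob": 12.0, "dl": 0.40, "targets": {"EGFR"}},
    {"name": "cmpd_3", "ob": 33.1, "dl": 0.10, "targets": {"TP53"}},
    {"name": "cmpd_4", "ob": 30.0, "dl": 0.18, "targets": {"CASP3", "TNF"}},
]
disease_genes = {"TNF", "IL6", "CASP3", "BCL2", "AKT1"}
ppi_edges = [
    ("TNF", "IL6"), ("TNF", "CASP3"), ("TNF", "AKT1"),
    ("AKT1", "CASP3"), ("BCL2", "CASP3"), ("EGFR", "AKT1"),
]


def screen(records, ob_min=30.0, dl_min=0.18):
    """Keep compounds meeting both the OB and DL cutoffs."""
    return [c for c in records if c["ob"] >= ob_min and c["dl"] >= dl_min]


def candidate_targets(active, disease):
    """Targets of retained compounds that are also disease-associated."""
    return set().union(*(c["targets"] for c in active)) & disease


def degree_ranking(edges, nodes):
    """Degree of each candidate within the PPI subnetwork, highest first."""
    degree = {n: 0 for n in nodes}
    for u, v in edges:
        if u in nodes and v in nodes:
            degree[u] += 1
            degree[v] += 1
    return sorted(degree.items(), key=lambda kv: (-kv[1], kv[0]))


active = screen(compounds)
candidates = candidate_targets(active, disease_genes)
hubs = degree_ranking(ppi_edges, candidates)
print([c["name"] for c in active], hubs)
```

Degree is only one of the topology measures the protocol mentions. Betweenness centrality would need shortest-path counting and is left out of this sketch.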

Phase 2: AI-Enhanced Network Modeling & Pathway Analysis

- Functional Enrichment: Perform Gene Ontology (GO) and Kyoto Encyclopedia of Genes and Genomes (KEGG) pathway analysis on hub targets using clusterProfiler R package or DAVID. Identify significantly enriched biological processes and pathways (adjusted p-value < 0.05) [13].

- Construct Comprehensive Network: Build a visual "Herb-Compound-Target-Pathway" network in Cytoscape.

- Apply GNN Analysis: For advanced studies, export the network and apply a Graph Neural Network (e.g., using PyTorch Geometric) to perform node/graph classification, identify critical network modules, or predict novel compound-disease links that might be missed by traditional topology measures [10] [11].
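
The enrichment step can be illustrated with a generic over-representation test: a hypergeometric tail probability per pathway, followed by Benjamini-Hochberg adjustment. This is a sketch of the general statistical idea, not the exact procedure implemented by clusterProfiler or DAVID, and the gene counts are invented.

```python
from math import comb


def hypergeom_pvalue(k, n, K, N):
    """P(X >= k): overlap of at least k when n genes are drawn from N, K of them in the pathway."""
    upper = min(n, K)
    favorable = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return favorable / comb(N, n)


def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Invented example: 5 hub targets drawn from a background of 20 genes.
pathways = {
    "pathway_A": {"size": 5, "overlap": 4},
    "pathway_B": {"size": 10, "overlap": 2},
}
names = list(pathways)
raw = [hypergeom_pvalue(pathways[p]["overlap"], 5, pathways[p]["size"], 20) for p in names]
adj = benjamini_hochberg(raw)
significant = [p for p, q in zip(names, adj) if q < 0.05]
print(dict(zip(names, adj)), significant)
```

Only `pathway_A` passes the adjusted p-value < 0.05 cutoff in this toy data. In a real analysis the background set and pathway definitions strongly affect the result and should be reported.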

Diagram 2: Core NP Workflow from Data to Validation

Experimental Validation Protocol

- In Silico Validation - Molecular Docking:

  - Preparation: Download 3D structures of key hub target proteins (e.g., AKT1, TNF-α) from the Protein Data Bank (PDB). Prepare the protein (remove water, add hydrogens, assign charges) using AutoDock Tools or PyMOL.

  - Ligand Preparation: Obtain 3D structures of top candidate compounds from PubChem, optimize their geometry, and set torsional bonds.

  - Docking Simulation: Perform docking using AutoDock Vina or Schrödinger Glide. Set the docking grid box to encompass the protein's active site.

  - Analysis: Evaluate the predicted binding affinity (kcal/mol). A score ≤ -5.0 kcal/mol is a threshold commonly used in network pharmacology studies to indicate favorable binding; because docking scores are approximate, treat it as a screening cutoff rather than proof of strong binding. Visually analyze binding poses for key interactions (hydrogen bonds, hydrophobic contacts) [13].

- In Vitro Validation:

  - Cell-based Assays: Treat relevant disease cell models (e.g., H9c2 cardiomyocytes for ischemia) with the TCM compound or formula extract.

  - Validation of Targets/Pathways: Use qPCR and Western Blot to measure mRNA and protein expression of predicted hub targets (e.g., PI3K, Bcl-2, Caspase-3). For pathway activity, use phospho-specific antibodies in Western blot or pathway-specific luciferase reporter assays.

  - Phenotypic Assays: Perform functional assays aligned with predicted mechanisms: CCK-8 for cell viability, flow cytometry for apoptosis, ELISA for inflammatory cytokines (IL-6, TNF-α), and DCFH-DA probe for ROS detection [13].

- In Vivo Validation:

  - Use a disease animal model (e.g., left anterior descending coronary artery ligation in rats for myocardial infarction).

  - Administer the TCM intervention. Collect tissue samples (e.g., heart) for histopathological analysis (H&E staining), immunohistochemistry of target proteins, and multi-omics validation (e.g., transcriptomics sequencing) to confirm network predictions at a systems level [11] [12].
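
To put the docking score cutoff from the in silico step in perspective, a score can be converted into the dissociation constant it would imply through the relation ΔG = RT ln(Kd). The sketch assumes the docking score can be read as a binding free energy at 298.15 K, which is only an approximation because docking scores are empirical estimates. The function name is hypothetical.

```python
from math import exp

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def score_to_kd(delta_g_kcal, temperature_k=298.15):
    """Dissociation constant (mol/L) implied by a binding free energy, via dG = RT ln(Kd)."""
    return exp(delta_g_kcal / (R_KCAL * temperature_k))


kd_cutoff = score_to_kd(-5.0)  # the commonly used screening threshold
kd_tight = score_to_kd(-9.0)   # a noticeably more favorable score
print(f"{kd_cutoff * 1e6:.0f} uM at -5.0 kcal/mol, {kd_tight * 1e6:.2f} uM at -9.0 kcal/mol")
```

Under these assumptions a score of -5.0 kcal/mol corresponds to a Kd in the hundreds of micromolar. For this reason scores near the cutoff are best used to prioritize compounds for SPR, MST, or cellular assays rather than as evidence of potent binding.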

Case Study: Network Pharmacology in TCM Cardiology Research

TCM herbs like Astragali Radix (Huangqi), Ginseng Radix (Renshen), and Salviae Miltiorrhizae Radix (Danshen) are cornerstones of cardioprotective formulas [13]. NP studies have systematically decoded their mechanisms:

- Anti-inflammatory & Anti-apoptosis: Networks for these herbs consistently highlight targets like TNF-α, IL-6, Bcl-2, and Caspase-3, converging on pathways such as PI3K-AKT and MAPK signaling [13]. This validates the multi-target approach in mitigating myocardial injury.

- Synergistic Formula Analysis: A study on Dengzhan Shengmai Capsule for ischemic stroke integrated NP with transcriptomics and metabolomics. It revealed the formula concurrently modulated neuroinflammatory injury (via IL-17 signaling) and thrombosis (via platelet activation), demonstrating treatment of the disease network from multiple angles [11].

- Dose-Response & Toxicity: NP integrated with metabolomics has been used to map the hepatotoxicity network of specific herbs such as Polygonum multiflorum, identifying key metabolic pathways involved and potential detoxifying strategies [11].

These cases exemplify how NP moves beyond ingredient lists to reveal the logic of synergy and provide a systems-level understanding of efficacy and safety.

Table 2: Key Research Reagent Solutions for Network Pharmacology Studies [12] [13]

| Category | Item / Resource | Function / Description | Example Sources / Tools |
|---|---|---|---|
| Databases | TCMSP | Primary database for TCM compounds, ADMET properties (OB, DL), and known targets. | https://tcmsp-e.com |
| Databases | ETCM 2.0 | Integrated platform for formulas, herbs, compounds, targets, and diseases. | http://www.tcmip.cn/ETCM |
| Databases | GeneCards & DisGeNET | Comprehensive sources for disease-associated genes and targets. | https://www.genecards.org; https://www.disgenet.org |
| Databases | STRING | Database of known and predicted PPI for network construction. | https://string-db.org |
| Software & Platforms | Cytoscape | Open-source platform for visualizing, analyzing, and modeling molecular interaction networks. | https://cytoscape.org |
| Software & Platforms | AutoDock Vina | Widely used program for molecular docking simulations. | http://vina.scripps.edu |
| Software & Platforms | R (clusterProfiler) | Statistical computing environment for GO and KEGG enrichment analysis. | https://www.r-project.org |
| Software & Platforms | PyTorch Geometric | Library for building and training GNNs on graph-structured data. | https://pytorch-geometric.readthedocs.io |
| Experimental Reagents | CCK-8 / MTT Assay Kits | Measure cell viability and proliferation to validate cytotoxic or protective effects. | Various commercial suppliers (Sigma, Dojindo) |
| Experimental Reagents | Annexin V-FITC/PI Apoptosis Kit | Detect apoptotic cell populations via flow cytometry. | Various commercial suppliers (BD Biosciences, Thermo Fisher) |
| Experimental Reagents | Pathway-Specific Antibody Panels | Validate protein expression and phosphorylation of predicted hub targets (e.g., PI3K/AKT, MAPK). | Cell Signaling Technology, Abcam |
| Experimental Reagents | ELISA Kits for Cytokines | Quantify secreted inflammatory mediators (e.g., TNF-α, IL-1β, IL-6). | R&D Systems, BioLegend |

The integration of NP with dynamic multi-omics profiling, AI, and real-world clinical data (EHRs) represents the future of TCM research [10] [11]. Key frontiers include:

- Temporal & Spatial Network Modeling: Moving from static snapshots to dynamic network models that capture the progression of disease and treatment response over time [10].

- Precision Herbal Medicine: Using AI-NP to stratify patient populations based on their "network dysfunction" profile and match them with optimized, personalized TCM formulations [11].

- Sustainable Drug Discovery: AI-driven virtual screening and de novo design dramatically reduce the resource-intensive trial-and-error process of screening natural product libraries, making discovery more efficient and sustainable [12].

In conclusion, network pharmacology provides the essential theoretical and methodological bridge between TCM's holistic philosophy and modern systems biology. Its synergy with AI and multi-omics technologies is not merely an upgrade but a paradigm shift, enabling the translation of centuries of empirical knowledge into mechanistically clear, clinically actionable, and globally resonant scientific discoveries. This synergistic approach firmly positions network pharmacology as the ideal and indispensable framework for the next era of traditional medicine research.

The study of biological systems has evolved from a reductionist focus on individual molecules to a holistic paradigm that seeks to understand the complex interactions within cells and organisms [14]. This systems biology approach is fundamentally enabled by omics technologies—high-throughput methods for characterizing collective molecular pools such as the genome, proteome, and metabolome [14]. These technologies generate vast, multidimensional data that, when integrated, allow researchers to model biological systems as interconnected networks rather than linear pathways.

This paradigm is particularly transformative for network pharmacology, especially in the realm of natural product research. Traditional medicine systems, like Traditional Chinese Medicine (TCM), operate on a "multi-component, multi-target, multi-pathway" principle, which aligns perfectly with a network-based understanding of disease and therapeutic intervention [10]. Isolating a single active compound is often insufficient to explain the efficacy of a natural product formulation; instead, synergistic effects across multiple biological scales must be elucidated [10]. Omics data provides the foundational layers for constructing the biological networks that map these interactions—from genetic predispositions and protein expressions to metabolic fluxes.

The integration of artificial intelligence (AI), including machine learning (ML) and graph neural networks (GNNs), with network pharmacology has created a powerful framework known as AI-driven network pharmacology (AI-NP) [10]. This framework uses multi-omics data to build, analyze, and dynamically model complex biological networks, enabling the prediction of drug targets, the elucidation of therapeutic mechanisms for natural products, and the identification of novel biomarker signatures. This whitepaper provides a technical guide to the core omics disciplines—genomics, proteomics, and metabolomics—detailing their methodologies, their integration for network construction, and their pivotal role within the AI-NP paradigm for advancing natural product research.

Core Omics Technologies: Methodologies and Data Generation

Genomics and Transcriptomics

Genomics involves the sequencing and analysis of an organism's complete DNA content, encompassing both coding genes and non-coding regulatory regions [14] [15]. Next-Generation Sequencing (NGS) technologies have revolutionized the field, enabling fast, cost-effective whole-genome sequencing that supports genome-wide association studies (GWAS), variant discovery, and the identification of potential drug targets [14].

- Key Method - Whole Genome Sequencing (WGS): WGS involves fragmenting the genomic DNA, attaching adapters, and performing massively parallel sequencing (e.g., Illumina platforms). The resulting short reads are computationally aligned and assembled against a reference genome to identify single nucleotide polymorphisms (SNPs), insertions/deletions (indels), and structural variants [14].

- Transcriptomics, an extension of genomics, studies the complete set of RNA transcripts. RNA-Sequencing (RNA-Seq) is the dominant technique, where cDNA libraries are prepared from RNA and sequenced. This provides a quantitative snapshot of gene expression (mRNA abundance), alternative splicing events, and the expression of non-coding RNAs [14].

- Advanced Frontiers: Single-Cell RNA-Seq (scRNA-seq) technologies (e.g., 10x Genomics Chromium) dissect cellular heterogeneity by profiling gene expression in individual cells, revealing rare cell types and dynamic state transitions [14]. Spatial Transcriptomics (e.g., 10x Visium) preserves the spatial context of gene expression within a tissue section, linking molecular profiles to tissue morphology and cellular neighborhood [14].

Proteomics, Glycoproteomics, and Glycomics

Proteomics is the large-scale study of proteins, including their expression levels, post-translational modifications (PTMs), and interactions [14] [15]. Mass spectrometry (MS) is the cornerstone technology. The workflow typically involves protein extraction, digestion into peptides, chromatographic separation (LC), and analysis by a tandem mass spectrometer (LC-MS/MS) [14].

- Key Method - Bottom-Up LC-MS/MS Proteomics: Proteins are extracted from a sample (e.g., tissue, biofluid) and digested with an enzyme like trypsin. Peptides are separated by liquid chromatography and ionized (e.g., via electrospray). The mass spectrometer measures the mass-to-charge ratio (m/z) of peptides (MS1 scan) and then selects specific ions for fragmentation to generate MS2 spectra. Computational pipelines match these spectra to protein sequence databases for identification and quantification [14].

- PTM Analysis: Specific proteomic workflows enrich for modified peptides (e.g., phosphopeptides, glycopeptides) to study PTMs like phosphorylation and glycosylation, which are critical for protein function and signaling [14]. Top-down proteomics, which analyzes intact proteins, provides a comprehensive view of combinatorial PTMs on a single protein molecule [14].
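The MS1 measurement described above reports mass-to-charge ratio rather than mass, so the same peptide appears at different m/z values depending on how many protons it carries. The short sketch below works through that arithmetic for positive-mode electrospray. The function name `peptide_mz` and the 1000.0 Da example mass are illustrative assumptions, not values from a cited dataset.

```python
PROTON_MASS = 1.007276  # Da; mass of a proton

def peptide_mz(monoisotopic_mass: float, charge: int) -> float:
    """m/z of a peptide carrying `charge` protons (positive-mode ESI)."""
    if charge < 1:
        raise ValueError("charge must be a positive integer")
    return (monoisotopic_mass + charge * PROTON_MASS) / charge

# Hypothetical peptide with a neutral monoisotopic mass of 1000.0 Da.
for z in (1, 2, 3):
    print(f"charge {z}+: m/z = {peptide_mz(1000.0, z):.4f}")
```

Search engines invert this relationship when matching observed precursor ions to candidate peptides.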

Metabolomics

Metabolomics focuses on profiling the small-molecule metabolites (typically <1,500 Da) within a biological system, representing the most downstream product of genomic and proteomic activity [14] [15]. The metabolome is highly dynamic and responsive to environmental and physiological changes.

- Key Method - Untargeted Metabolomics by LC-MS: Metabolites are extracted using solvents. Separation is achieved via liquid chromatography (e.g., reversed-phase or hydrophilic interaction chromatography). High-resolution mass spectrometry detects thousands of metabolite features. Data processing involves feature detection, alignment, and statistical analysis to distinguish metabolite profiles between experimental groups [14] [15].

- Metabolite Identification remains a challenge. It relies on matching MS/MS fragmentation patterns and chromatographic retention times to authentic standards in curated libraries. Pathway analysis tools (e.g., MetaboAnalyst) map identified metabolites to biochemical pathways for functional interpretation [16].

Table 1: Comparative Overview of Core Omics Technologies

| Omics Layer | Analytical Target | Primary Technologies | Key Outputs | Scale & Throughput |

|---|---|---|---|---|

| Genomics | DNA sequence, structure, variation | Next-Generation Sequencing (NGS), Long-read sequencing (PacBio, Nanopore) | Genetic variants (SNPs, CNVs), genome structure, epigenetic marks | Entire genome (3×10⁹ bp for human); very high throughput [14] |

| Transcriptomics | RNA abundance & sequence | RNA-Seq, Single-Cell RNA-Seq, Spatial Transcriptomics | Gene expression levels, splicing isoforms, novel transcripts | Whole transcriptome (~20,000 coding genes); high throughput [14] |

| Proteomics | Protein identity, quantity, modification | Mass Spectrometry (LC-MS/MS), Antibody arrays, Top-down MS | Protein expression, post-translational modifications (PTMs), protein complexes | 10,000+ proteins per run; moderate to high throughput [14] |

| Metabolomics | Small-molecule metabolites | GC-MS, LC-MS, NMR | Metabolite identification and relative/absolute concentration | 100s-1000s of metabolites per run; high throughput [14] [15] |

Data Integration for Biological Network Construction

Stand-alone omics analyses provide a limited view. Multi-omics integration is essential to construct comprehensive biological networks that reveal causal relationships across molecular layers [14] [16]. Integration strategies can be pathway-, network-, or correlation-based.

- Pathway- or Ontology-Based Integration: Tools like MetaboAnalyst and iPEAP map genes, proteins, and metabolites onto predefined biochemical pathways (e.g., KEGG, Reactome) [16]. This identifies pathways significantly enriched with altered molecules across omics layers, offering a biologically contextualized but predefined network view.

- Biological Network-Based Integration: This method constructs networks from known molecular interactions. Tools like Cytoscape with its MetScape plugin or SAMNetWeb integrate protein-protein interactions, gene regulatory networks, and metabolic reactions to create a unified interaction graph [16]. Omics data is overlaid on this graph to identify active network modules.

- Empirical Correlation-Based Integration: When prior knowledge is sparse, statistical correlations are computed across omics datasets. Methods like Weighted Gene Co-expression Network Analysis (WGCNA) identify highly correlated clusters (modules) of genes, proteins, and metabolites that may function together [16]. Multi-block PLS and similar multivariate methods find latent variables that explain covariance between different omics datasets [16].
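As a rough illustration of the correlation-based strategy, the sketch below links features from two omics layers whenever their profiles across matched samples are strongly correlated. It is a deliberately simplified pairwise Pearson screen, not WGCNA or multi-block PLS. The feature names, the toy values, and the 0.8 threshold are assumptions chosen only for demonstration.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation of two equal-length numeric profiles."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if sd_x == 0 or sd_y == 0:
        return 0.0  # a constant profile carries no correlation signal
    return cov / (sd_x * sd_y)

def correlation_edges(layer_a, layer_b, threshold=0.8):
    """Cross-layer edges whose absolute correlation meets the threshold."""
    edges = []
    for name_a, profile_a in layer_a.items():
        for name_b, profile_b in layer_b.items():
            r = pearson(profile_a, profile_b)
            if abs(r) >= threshold:
                edges.append((name_a, name_b, r))
    return edges

# Toy profiles measured across the same four samples (hypothetical values).
genes = {"GENE_A": [1.0, 2.0, 3.0, 4.0], "GENE_B": [2.0, 1.0, 2.0, 1.0]}
metabolites = {"MET_X": [2.1, 3.9, 6.2, 8.0], "MET_Y": [5.0, 4.0, 3.1, 1.9]}

edges = correlation_edges(genes, metabolites)
for gene, metabolite, r in edges:
    print(f"{gene} -- {metabolite}: r = {r:+.3f}")
```

In practice the threshold would be chosen with multiple-testing control in mind, since the number of cross-layer pairs grows quickly with feature count.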

Table 2: Software Tools for Multi-Omics Data Integration and Network Analysis [16]

| Tool Name | Primary Integration Method | Accepted Data Types | Key Features | Complexity |

|---|---|---|---|---|

| MetaboAnalyst | Pathway Enrichment | Transcriptomics, Metabolomics | Comprehensive metabolomics processing, integrated pathway analysis, user-friendly web interface | Low [16] |

| Cytoscape / MetScape | Biological Network | Gene Expression, Metabolite Data | Visualizes gene-metabolite networks, performs pathway enrichment within a powerful network analysis platform | Moderate [16] |

| WGCNA | Empirical Correlation | Any (Genomics, Proteomics, etc.) | Identifies co-expression modules, relates modules to clinical traits, robust network topology analysis | High [16] |

| mixOmics | Multivariate/Correlation | Any heterogeneous datasets | Provides multiple multivariate methods (sPLS, rCCA) for identifying correlated variables across datasets | High [16] |

| Grinn | Hybrid (Graph Database) | Genomics, Proteomics, Metabolomics | Uses a graph database (Neo4j) to flexibly integrate biological and empirical relationships dynamically | High [16] |

AI-Driven Network Pharmacology: A Framework for Natural Product Research

Network pharmacology (NP) provides the conceptual framework to understand polypharmacology, while artificial intelligence (AI) provides the computational engine to implement it at scale and with predictive power [10]. AI-NP addresses the limitations of conventional NP, such as handling noisy, high-dimensional data and capturing dynamic interactions [10].

- AI/ML Methodologies in the Pipeline:

- Target Prediction: ML models (e.g., Random Forest, Support Vector Machines) are trained on chemical structure descriptors and known target interactions to predict novel targets for natural product compounds [10].

- Network Inference: Graph Neural Networks (GNNs) operate directly on biological network structures. They can predict missing interactions, infer node properties (e.g., essentiality of a protein), or identify disease-relevant subnetworks by learning from multi-omics features associated with each node (gene/protein/metabolite) [10].

- Mechanism Elucidation: AI models integrate multi-omics data from in vitro or in vivo experiments treated with a natural product. By comparing network states (e.g., gene/protein co-expression networks) before and after treatment, AI can identify key altered network modules and upstream regulators, suggesting a mechanism of action [10].

- Synergy Prediction: Deep learning (DL) models analyze the complex, non-linear relationships between the chemical features of multiple compounds in a formulation and their combined biological effects (e.g., transcriptomic response), predicting synergistic combinations [10].
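To make the idea of a model that "operates directly on network structure" more concrete, the sketch below shows the neighborhood aggregation step that sits at the core of most GNN layers: each node's feature vector is replaced by the mean of its own features and those of its neighbors. A trained GNN would add learned weight matrices and non-linearities on top of this step. Both are omitted here, and the three-protein graph and feature values are hypothetical.

```python
def mean_aggregate(adjacency, features):
    """One round of mean-neighbor message passing (self-loop included).

    This is only the aggregation step of a GNN layer; there are no
    learned weights or activation functions in this sketch.
    """
    updated = {}
    for node, feats in features.items():
        neighborhood = [node] + list(adjacency.get(node, []))
        updated[node] = [
            sum(features[n][k] for n in neighborhood) / len(neighborhood)
            for k in range(len(feats))
        ]
    return updated

# Hypothetical protein graph; each node has two omics-derived features
# (for example, a transcript fold change and a protein fold change).
adjacency = {"P1": ["P2", "P3"], "P2": ["P1"], "P3": ["P1"]}
features = {"P1": [1.0, 0.0], "P2": [0.0, 2.0], "P3": [2.0, 1.0]}

hidden = mean_aggregate(adjacency, features)
for node, vector in hidden.items():
    print(node, vector)
```

Stacking several such rounds lets information from more distant parts of the network reach each node, which is what allows node-level predictions to depend on network position as well as on the node's own measurements.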

Table 3: Comparison of Conventional vs. AI-Driven Network Pharmacology [10]

| Comparison Dimension | Conventional Network Pharmacology | AI-Driven Network Pharmacology (AI-NP) |

|---|---|---|

| Data Acquisition & Integration | Relies on static public databases; manual, fragmented integration. | Integrates dynamic, multimodal data (omics, electronic medical records (EMR), literature) automatically via natural language processing (NLP) and data fusion algorithms. |

| Algorithmic Core | Based on statistical correlation and network topology analysis. | Employs ML, DL, and GNNs to learn complex, non-linear patterns from data. |

| Model Interpretability | Generally high, as networks are built from known interactions. | Can be low ("black box"); requires Explainable AI (XAI) techniques (e.g., SHAP, attention mechanisms). |

| Computational Scalability | Limited, often manual or semi-automated; struggles with big data. | High-throughput, parallelizable; designed for large-scale biological networks and omics data. |

| Dynamic Modeling | Typically generates static "snapshot" networks. | Capable of modeling temporal dynamics and network perturbations over time. |

| Clinical Translation | Focus on mechanistic hypothesis generation; indirect clinical link. | Direct integration with clinical big data (electronic health records (EHRs), real-world data (RWD)) for predictive biomarker and patient stratification models. |

Experimental Protocol: A Multi-Omics Workflow for Natural Product Mechanism Elucidation

This protocol outlines a systematic, multi-omics experiment to investigate the mechanism of action (MoA) of a natural product extract in vitro.

Study Design and Sample Preparation

- Cell Model Selection: Choose a disease-relevant cell line (e.g., hepatic carcinoma cell line for a liver-tonic herbal medicine).

- Treatment Groups: Seed cells and divide into: (a) Vehicle control group (treated with solvent, e.g., DMSO), (b) Natural Product treatment group (treated with IC₂₀ or IC₅₀ concentration of the extract, determined by prior viability assay), and (c) Positive control group (treated with a standard drug, if available). Use 3-6 biological replicates per group, and never fewer than 3.

- Treatment Duration: Treat cells for a relevant time course (e.g., 6, 12, 24 hours) to capture early and late molecular responses.

- Sample Harvest: At each time point, wash cells with PBS and harvest.

- For genomics/transcriptomics: Lyse cells directly in RNA/DNA stabilization reagent.

- For proteomics/metabolomics: Rapidly quench metabolism, lyse cells, and snap-freeze pellets in liquid nitrogen. Store all samples at -80°C.

Multi-Omics Profiling

- Transcriptomics: Extract total RNA, check quality (RIN > 8.5), prepare stranded cDNA libraries, and perform sequencing on an Illumina platform (e.g., NovaSeq) to a depth of ~30 million paired-end reads per sample.

- Proteomics: Lyse cell pellets in RIPA buffer with protease/phosphatase inhibitors. Digest proteins with trypsin. Desalt peptides and perform LC-MS/MS on a high-resolution instrument (e.g., Orbitrap Exploris 480 with FAIMS). Use data-dependent acquisition (DDA) or data-independent acquisition (DIA) modes.

- Metabolomics: Extract metabolites from pellets using a cold methanol/water/chloroform method. Dry extracts and reconstitute for LC-MS analysis. Run samples in both positive and negative ionization modes on a platform like a Q-Exactive HF mass spectrometer coupled to a HILIC column.

Data Processing and Network Construction & Analysis

- Primary Data Analysis:

- RNA-Seq: Align reads to reference genome (e.g., STAR aligner), quantify gene counts (featureCounts), perform differential expression analysis (DESeq2).

- Proteomics: Process raw files with software (e.g., Proteome Discoverer, DIA-NN, or MaxQuant). Identify and quantify proteins. Perform statistical analysis (e.g., with limma).

- Metabolomics: Process with software (e.g., Compound Discoverer, XCMS). Annotate metabolites using MS/MS libraries. Perform multivariate stats (PCA, OPLS-DA).

- Multi-Omics Network Construction:

- Map significantly altered genes (|log2FC| > 1, adj. p < 0.05), proteins, and metabolites (VIP > 1, p < 0.05) onto a knowledge graph. Use a tool like Grinn or Cytoscape with integrated databases (e.g., STRING for PPI, KEGG for pathways) [16].

- Perform WGCNA on the transcriptomics data to identify co-expression modules. Correlate module eigengenes with proteomic and metabolomic profiles to find multi-omics modules [16].

- AI-NP Analysis for MoA:

- Use the integrated network as input for a GNN model. Train the GNN to classify nodes (e.g., genes/proteins) as "treatment-responsive" based on their multi-omics features and network position.

- Apply GNN Explainability (e.g., GNNExplainer) to identify the most influential subnetworks and nodes driving the model's prediction. This subnetwork represents the core mechanistic network of the natural product's action.

- Validate key predictions (e.g., a central regulatory protein) using orthogonal methods like siRNA knockdown followed by functional assays.
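The significance filter used in the network construction step can be sketched as follows. The example applies a Benjamini-Hochberg adjustment to raw p-values and then keeps features passing both the fold-change and adjusted p-value cutoffs. In a real pipeline DESeq2 or limma would already report adjusted p-values, so the helper `benjamini_hochberg` is included only to show what that column represents. Gene names and numbers are hypothetical.

```python
def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values, returned in input order."""
    n = len(pvalues)
    order = sorted(range(n), key=lambda i: pvalues[i])
    adjusted = [0.0] * n
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(n, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * n / rank)
        adjusted[idx] = running_min
    return adjusted

def select_altered(names, log2fc, pvalues, lfc_cutoff=1.0, padj_cutoff=0.05):
    """Features with |log2FC| above the cutoff and adjusted p below the cutoff."""
    padj = benjamini_hochberg(pvalues)
    return [
        name
        for name, lfc, q in zip(names, log2fc, padj)
        if abs(lfc) > lfc_cutoff and q < padj_cutoff
    ]

# Hypothetical differential expression results for four genes.
names = ["G1", "G2", "G3", "G4"]
log2fc = [2.3, -1.8, 0.4, 3.1]
pvalues = [0.001, 0.01, 0.03, 0.5]

altered = select_altered(names, log2fc, pvalues)
print(altered)
```

Taking the absolute value of the fold change matters here: without it, strongly down-regulated genes such as G2 would be silently excluded from the network.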

The Scientist's Toolkit: Essential Reagents and Materials

| Category | Item | Function in Omics Experiments |

|---|---|---|

| Sample Preparation | Tri-Reagent (or similar) | Simultaneous extraction of RNA, DNA, and protein from a single biological sample, crucial for matched multi-omics analysis. |

| | RIPA Lysis Buffer with Protease/Phosphatase Inhibitors | Efficient lysis of cells/tissues for proteomics while preserving protein integrity and phosphorylation states. |

| | Cold Methanol/Acetonitrile (80%) | Quenches metabolic activity instantly and extracts polar and semi-polar metabolites for metabolomics. |

| Sequencing & MS | Illumina-Compatible Library Prep Kits (e.g., TruSeq) | Prepares cDNA libraries from RNA with appropriate adapters for next-generation sequencing on Illumina platforms [14]. |

| | Trypsin (Sequencing Grade) | Enzyme for digesting proteins into peptides for bottom-up proteomics. Its specificity allows for reliable database searching. |

| | C18 Solid-Phase Extraction (SPE) Cartridges | Desalts and purifies peptide or metabolite samples prior to LC-MS, reducing ion suppression and improving data quality. |

| Chromatography | C18 Reverse-Phase LC Columns | The standard column for separating peptides (proteomics) and hydrophobic metabolites in LC-MS systems. |

| | HILIC (Hydrophilic Interaction) Columns | Essential for retaining and separating polar metabolites that are poorly retained by reverse-phase chromatography in metabolomics. |

| Data Analysis | Internal Standards (e.g., Heavy-labeled peptides/amino acids) | Spiked into samples for proteomics/metabolomics to correct for technical variability during sample processing and MS analysis. |

| | Mass Spectral Libraries (e.g., NIST, mzCloud, GNPS) | Collections of reference MS/MS spectra for metabolite identification by spectral matching in metabolomics. |

| Curated Pathway Databases (e.g., KEGG, Reactome) | Provide the biological context (pathways, interactions) essential for integrating omics data and constructing networks [16]. |

The integration of genomics, proteomics, and metabolomics is fundamental to building the high-resolution, multi-layered biological networks that underpin modern systems pharmacology. For natural product research, this integration, powered by AI, moves the field beyond phenomenological observation to mechanistic, network-level understanding. The future of AI-NP lies in enhancing temporal and spatial resolution (e.g., integrating single-cell and spatial omics), improving model interpretability via XAI, and strengthening the link to clinical outcomes through integration with real-world data. The continued development of this framework promises to unlock the systemic therapeutic potential of natural products in a precise and evidence-based manner.

The investigation of natural products, particularly within systems like Traditional Chinese Medicine (TCM), presents a unique paradox: immense therapeutic potential obscured by profound mechanistic complexity. The classical "one drug, one target" paradigm of modern pharmacology falters when confronted with herbs containing hundreds of chemicals, each capable of interacting with multiple biological targets. Network pharmacology has emerged as the essential framework to navigate this complexity, shifting the focus from isolated components to system-level interactions [10]. This approach aligns perfectly with the holistic principles of TCM, aiming to decode the "multi-component, multi-target, multi-pathway" mode of action that characterizes herbal medicine [10].

The advent of Artificial Intelligence (AI) has catalyzed a transformative leap in this field. AI-driven network pharmacology (AI-NP) leverages machine learning (ML), deep learning (DL), and graph neural networks (GNNs) to process high-dimensional, multi-source biological data, enabling predictions and insights beyond the reach of traditional statistical methods [10]. This confluence of disciplines provides the tools to systematically deconstruct and analyze the core 'Herb-Component-Target-Disease-Pathway' network. Such models move beyond simple association lists to capture the topological relationships within biological networks, offering a predictive, scientifically grounded understanding of herbal efficacy. For instance, a network medicine framework revealed that the therapeutic effectiveness of an herb for a symptom can be predicted by the network proximity of the herb's protein targets to the module of proteins associated with that symptom in the human protein interactome [17]. This manuscript serves as a technical guide to this core conceptual model, detailing its computational architecture, experimental validation, and integration with AI, thereby situating it within the broader thesis of modernizing natural product research.

Core Conceptual Model Breakdown

The 'Herb-Component-Target-Disease-Pathway' model is not a linear pathway but a multi-layered, interconnected network. Deconstructing it involves integrating heterogeneous data into a unified computational framework that can quantify and predict relationships.

Data Layer: Integration of Multi-Source Biological Data

The model's foundation is built on curated data linking each entity. Key public databases serve as critical resources for constructing these networks [18] [17] [10].

Table 1: Core Data Sources for Network Construction

| Data Type | Key Databases | Description & Role in Model | Example Scale |

|---|---|---|---|

| Herb-Disease Associations (HDAs) | HERB, TCMID [18] | Known therapeutic relationships forming the gold-standard for training and validation. | 4,260 associations between 25 herbs and 400 diseases [18]. |

| Herb-Component (Ingredient) | HERB, TCMIO [18] [17] | Links herbs to their chemical constituents. | 2,059 ingredients associated with studied herbs [18]. |

| Component-Target | HIT 2.0, STITCH [17] | Identifies protein targets of herbal chemicals, often via text-mining and manual curation. | HIT 2.0 links 798 herbs to 2,270 protein targets [17]. |

| Target-Pathway | KEGG, Gene Ontology (GO) [18] | Places protein targets into functional context (biological pathways, processes). | Used to calculate functional similarity between herbs or diseases. |

| Disease/Symptom-Gene | Disease ontology, Symptom-gene datasets [17] | Links diseases or TCM symptoms to associated proteins/genes. | 174 symptoms with ≥20 associated proteins form network modules [17]. |

| Protein-Protein Interactions (PPI) | Human Protein Interactome [17] | The scaffold network defining functional distances between targets and disease modules. | Essential for calculating network proximity metrics. |

Computational Kernel Layer: Measuring Multi-Faceted Similarity

A pivotal innovation in modern HDA prediction is the use of kernel-based methods. Kernels are similarity matrices that quantify relationships between entities (herbs or diseases) based on different profiles. The HDAPM-NCP model, for example, constructs multiple kernels for herbs and diseases before fusion [18].

Table 2: Kernel Functions for Herb and Disease Representation

| Kernel Name | Entity | Basis for Calculation | Mathematical Formulation (Gaussian Interaction Profile Kernel) | Biological Interpretation |

|---|---|---|---|---|

| GIP Kernel based on HDA | Herb | Known disease association profile. | \( K_{HGIP}^{HD}(H_i, H_j) = \exp\left(-\partial_{HD} \lVert HD(H_i) - HD(H_j) \rVert^2\right) \) [18] | Herbs with similar therapeutic applications are considered similar. |

| GIP Kernel based on Ingredients | Herb | Chemical composition profile. | \( K_{HGIP}^{HI}(H_i, H_j) = \exp\left(-\partial_{HI} \lVert HI(H_i) - HI(H_j) \rVert^2\right) \) [18] | Herbs sharing chemical constituents are considered similar. |

| GIP Kernel based on Targets | Herb | Protein target profile (e.g., from reference mining or high-throughput data). | \( K_{HGIP}^{HT}(H_i, H_j) = \exp\left(-\partial_{HT} \lVert HT(H_i) - HT(H_j) \rVert^2\right) \) [18] | Herbs modulating overlapping sets of proteins are considered similar. |

| Semantic Similarity Kernel | Disease | Disease ontology (MeSH) structure. | Calculated from the distance between disease terms in a directed acyclic graph. | Diseases sharing closer ancestry in the ontology are more similar. |

| Function Similarity Kernel | Disease | Shared GO terms or KEGG pathways of associated genes. | Based on the overlap of enriched functional annotations. | Diseases with dysregulated common biological processes are similar. |

These individual kernels are then fused into a unified herb kernel and a unified disease kernel using methods like average weighting or multiple kernel learning, providing a comprehensive similarity measure that incorporates all available data perspectives [18].
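The kernel construction and fusion described above can be sketched in a few lines. The example computes a Gaussian interaction profile kernel from binary herb profiles and fuses two kernels by simple averaging. The bandwidth normalization (a constant divided by the mean squared norm of the profiles) follows a convention common in the GIP literature and is an assumption here, since the source does not state how the bandwidth parameter is set. The toy profiles and function names are likewise hypothetical.

```python
from math import exp

def gip_kernel(profiles, bandwidth_scale=1.0):
    """Gaussian interaction profile kernel over a list of binary profiles.

    Assumption: bandwidth = bandwidth_scale / mean squared profile norm.
    """
    n = len(profiles)
    mean_sq_norm = sum(sum(v * v for v in p) for p in profiles) / n
    if mean_sq_norm == 0:
        raise ValueError("all profiles are empty; kernel is undefined")
    bandwidth = bandwidth_scale / mean_sq_norm
    kernel = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            sq_dist = sum((a - b) ** 2 for a, b in zip(profiles[i], profiles[j]))
            kernel[i][j] = exp(-bandwidth * sq_dist)
    return kernel

def fuse_kernels(kernels, weights=None):
    """Weighted average of same-sized kernel matrices (equal weights by default)."""
    weights = weights or [1.0 / len(kernels)] * len(kernels)
    n = len(kernels[0])
    return [
        [sum(w * k[i][j] for w, k in zip(weights, kernels)) for j in range(n)]
        for i in range(n)
    ]

# Three hypothetical herbs: rows are herbs, columns are diseases or ingredients.
herb_disease = [[1, 1, 0], [1, 0, 0], [0, 0, 1]]
herb_ingredient = [[1, 0, 1, 0], [1, 0, 1, 1], [0, 1, 0, 0]]

k_hd = gip_kernel(herb_disease)
k_hi = gip_kernel(herb_ingredient)
k_h = fuse_kernels([k_hd, k_hi])

for row in k_h:
    print([round(value, 3) for value in row])
```

Herbs 0 and 1 share a disease and two ingredients, so their fused similarity comes out higher than that between herbs 0 and 2, which share nothing. Multiple kernel learning would replace the equal weights with weights fitted to the data.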

Network Proximity Layer: The Topological Principle of Action

Beyond direct associations, the model incorporates network topology via the human protein-protein interaction (PPI) network, or interactome. The core hypothesis is that the therapeutic effect of an herb is a function of the network distance between its targets and the disease module (the local neighborhood of proteins associated with a disease or symptom) [17]. The critical metric is the average shortest path length (\(d_{s,t}\)) between herb targets and disease/symptom proteins within the PPI network. A significant shortening of this distance compared to random expectation indicates a higher likelihood of therapeutic association [17]. This principle bridges TCM's symptom-based treatment and modern systems biology, explaining efficacy even when herb targets do not directly overlap with disease genes but instead influence the network neighborhood.
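A minimal sketch of this proximity calculation is given below. It computes the average shortest path length between a set of herb targets and a set of disease proteins using breadth-first search, then compares it with the distances obtained for randomly chosen target sets of the same size. The toy graph, the protein labels, and the uniform random sampling are illustrative assumptions. Published analyses typically use degree-matched randomization on the full interactome, which is not reproduced here.

```python
import random
from collections import deque
from statistics import mean, pstdev

def bfs_distances(graph, source):
    """Shortest-path length from `source` to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist

def average_distance(graph, targets, disease_proteins):
    """Mean shortest-path length over all reachable target/disease pairs."""
    lengths = []
    for target in targets:
        dist = bfs_distances(graph, target)
        lengths.extend(dist[p] for p in disease_proteins if p in dist)
    return mean(lengths) if lengths else float("inf")

def proximity_z_score(graph, targets, disease_proteins, n_random=200, seed=0):
    """Z-score of the observed distance against uniformly random target sets."""
    rng = random.Random(seed)
    nodes = sorted(graph)
    observed = average_distance(graph, targets, disease_proteins)
    null = [
        average_distance(graph, rng.sample(nodes, len(targets)), disease_proteins)
        for _ in range(n_random)
    ]
    spread = pstdev(null)
    return (observed - mean(null)) / spread if spread > 0 else 0.0

# Toy undirected interactome (hypothetical proteins A to G).
edges = [("A", "B"), ("B", "C"), ("C", "D"), ("D", "E"), ("B", "F"), ("F", "G")]
graph = {}
for u, v in edges:
    graph.setdefault(u, set()).add(v)
    graph.setdefault(v, set()).add(u)

herb_targets = ["A", "B"]
disease_module = ["C", "D"]
d_st = average_distance(graph, herb_targets, disease_module)
z = proximity_z_score(graph, herb_targets, disease_module)
print(f"average distance = {d_st}, z-score = {z:.2f}")
```

A negative z-score means the herb's targets sit closer to the disease module than random protein sets do, which is the signal the proximity hypothesis looks for.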

AI-Driven Predictive Layer: From Features to Forecasts

This layer integrates the constructed features (kernels, network proximities) to predict novel associations. AI models, particularly Graph Neural Networks (GNNs) and bilinear decoders, excel here. They can learn low-dimensional embeddings for herbs and diseases directly from heterogeneous networks (e.g., herb-ingredient-target-disease graphs) and then score potential pairs [10]. This represents a shift from feature engineering to representation learning, where the model itself discovers the most informative patterns for prediction.

Table 3: Comparison of Traditional NP vs. AI-Driven NP (AI-NP)

| Comparison Dimension | Traditional Network Pharmacology | AI-Driven Network Pharmacology | Impact on Model Performance |

|---|---|---|---|

| Data Acquisition & Integration | Relies on manual curation from fragmented public databases; static. | Integrates multimodal, high-dimensional data (omics, EMR) dynamically [10]. | Enhances completeness and reduces bias in the foundational network. |

| Algorithmic Core | Based on statistical correlation and topology analysis (e.g., centrality). | Utilizes ML/DL/GNN to automatically identify complex, non-linear patterns [10]. | Improves predictive accuracy and generalizability to novel associations. |

| Model Interpretability | High; relationships are directly visible in constructed networks. | Often lower ("black box"), but improved by Explainable AI (XAI) tools like SHAP [10]. | Balancing predictive power with mechanistic insight remains a key challenge. |

| Computational Scalability | Limited, manual or semi-automated processes. | High-throughput, parallel computing suitable for large-scale network analysis [10]. | Enables screening of entire herbomes against disease genomes. |

| Clinical Translational Potential | Focused on mechanistic hypothesis generation for preclinical study. | Can integrate real-world data (RWD) for precision prediction and patient stratification [10]. | Bridges the gap between network models and clinical outcomes. |

Experimental & Computational Protocols

Protocol 1: Construction and Validation of a Kernel-Based HDA Prediction Model (HDAPM-NCP)

This protocol outlines the steps for building a state-of-the-art prediction model as described in Scientific Reports (2025) [18].

Dataset Curation:

- Source initial herb-disease associations from a comprehensive database like HERB.

- Apply stringent filters: select herbs with high-throughput experimental support and diseases with reference-mined associations and valid MeSH IDs.

- Combine the final set of known associations (positive samples) with an equal number of unknown pairs (treated as negative samples) to form the benchmark set.
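The balanced benchmark set from the last curation step might be assembled as in the sketch below. Drawing the unknown pairs uniformly at random with a fixed seed is an assumption, since the source does not specify the sampling scheme. The herb and disease identifiers are placeholders.

```python
import random

def sample_negatives(herbs, diseases, positives, seed=0):
    """Draw as many unlabeled herb-disease pairs as there are known positives.

    Assumption: uniform random sampling without replacement.
    """
    known = set(positives)
    unknown = [(h, d) for h in herbs for d in diseases if (h, d) not in known]
    if len(unknown) < len(known):
        raise ValueError("not enough unknown pairs to balance the positives")
    return random.Random(seed).sample(unknown, len(known))

# Placeholder identifiers, not real database entries.
herbs = ["herb_1", "herb_2", "herb_3"]
diseases = ["disease_a", "disease_b", "disease_c"]
positives = [("herb_1", "disease_a"), ("herb_2", "disease_b"), ("herb_3", "disease_c")]

negatives = sample_negatives(herbs, diseases, positives)
print(negatives)
```

Unknown pairs are not confirmed non-associations, so some sampled "negatives" may be true but undiscovered associations. This tends to make reported performance conservative rather than inflated.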

Multi-Kernel Construction:

- For herbs, calculate the six GIP kernels based on: (i) disease profile, (ii) ingredient profile, (iii) reference-mined targets, (iv) statistically inferred targets, (v) GO term profile, and (vi) KEGG pathway profile.

- For diseases, calculate the five kernels based on: (i) herb profile, (ii) ingredient profile, (iii) target profile, (iv) GO semantic similarity, and (v) disease MeSH semantic similarity.

- Normalize each kernel matrix and fuse them into a single, unified kernel for herbs (\(K_H\)) and diseases (\(K_D\)) using a weighted average approach.

Model Training & Prediction with Network Consistency Projection (NCP):

- Inputs: Unified kernels \(K_H\), \(K_D\), and the binary adjacency matrix of known HDAs (\(A\)).

- The NCP algorithm projects the herb and disease similarity information onto the association network. The prediction score matrix \(S\) is derived iteratively, quantifying how consistent a potential herb-disease pair is with both the known associations and the multi-source similarity information.

- The final output is a continuous score matrix \(S\), where a higher \(S_{ij}\) indicates a higher predicted probability of association between herb \(i\) and disease \(j\).
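To give a feel for how similarity information is projected onto the association matrix, the sketch below implements one closed-form variant of network consistency projection that appears in earlier association-prediction work. It is an assumption that this form resembles the scoring used in HDAPM-NCP. The published model may differ in normalization and in how the score is derived, so this should be read as an illustration of the projection idea only. All matrices are toy values.

```python
from math import sqrt

def norm(vector):
    return sqrt(sum(x * x for x in vector))

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def ncp_scores(k_h, k_d, a):
    """Closed-form network consistency projection (assumed variant).

    k_h: herb-herb similarity, k_d: disease-disease similarity,
    a: binary herb-by-disease matrix of known associations.
    """
    n_h, n_d = len(a), len(a[0])
    a_cols = [[a[i][j] for i in range(n_h)] for j in range(n_d)]
    kd_cols = [[k_d[r][j] for r in range(n_d)] for j in range(n_d)]
    scores = [[0.0] * n_d for _ in range(n_h)]
    for i in range(n_h):
        for j in range(n_d):
            col_norm, row_norm = norm(a_cols[j]), norm(a[i])
            # Projection of herb i's similarity row onto disease j's known herbs.
            herb_proj = dot(k_h[i], a_cols[j]) / col_norm if col_norm else 0.0
            # Projection of herb i's known diseases onto disease j's similarity column.
            disease_proj = dot(a[i], kd_cols[j]) / row_norm if row_norm else 0.0
            denom = norm(k_h[i]) + norm(kd_cols[j])
            scores[i][j] = (herb_proj + disease_proj) / denom if denom else 0.0
    return scores

# Toy example: herb 2 has no known associations but resembles herb 0.
k_h = [[1.0, 0.3, 0.9], [0.3, 1.0, 0.1], [0.9, 0.1, 1.0]]
k_d = [[1.0, 0.2], [0.2, 1.0]]
a = [[1, 0], [0, 1], [0, 0]]

s = ncp_scores(k_h, k_d, a)
for row in s:
    print([round(value, 3) for value in row])
```

In this toy case the unannotated herb 2 receives a higher score for disease 0 than for disease 1, because it is most similar to herb 0, which is known to treat disease 0.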

Validation & Evaluation:

- Perform five-fold cross-validation both globally (random pair split) and locally (by disease, leaving all associations for one disease out).

- Use standard metrics: Area Under the Receiver Operating Characteristic Curve (AUROC) and Area Under the Precision-Recall Curve (AUPR).

- Conduct ablation studies by removing specific kernels to assess their individual contribution to model performance.
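Both evaluation metrics can be computed directly from a list of labels and prediction scores, as sketched below. AUROC is obtained from the rank-sum (Mann-Whitney) formulation with average ranks for ties, and AUPR is estimated by average precision, which is one common estimator of the area under the precision-recall curve. The labels and scores are made up for illustration.

```python
def auroc(labels, scores):
    """AUROC via the rank-sum formulation (ties receive average ranks)."""
    pairs = sorted(zip(scores, labels))
    ranks = [0.0] * len(pairs)
    i = 0
    while i < len(pairs):
        j = i
        while j + 1 < len(pairs) and pairs[j + 1][0] == pairs[i][0]:
            j += 1
        for k in range(i, j + 1):
            ranks[k] = (i + j) / 2 + 1  # average 1-based rank of the tie group
        i = j + 1
    n_pos = sum(label for _, label in pairs)
    n_neg = len(pairs) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("AUROC needs at least one positive and one negative")
    pos_rank_sum = sum(r for r, (_, label) in zip(ranks, pairs) if label == 1)
    return (pos_rank_sum - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)

def average_precision(labels, scores):
    """Average precision, used here as an estimate of AUPR."""
    order = sorted(range(len(scores)), key=lambda idx: -scores[idx])
    hits, total = 0, 0.0
    for rank, idx in enumerate(order, start=1):
        if labels[idx] == 1:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0

# Hypothetical held-out fold: 1 = known association, 0 = sampled unknown pair.
labels = [1, 0, 1, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]

print(f"AUROC = {auroc(labels, scores):.4f}")
print(f"AUPR (average precision) = {average_precision(labels, scores):.4f}")
```

AUPR is usually the more informative of the two when true associations are rare relative to candidate pairs, which is the typical situation in herb-disease prediction.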

Protocol 2: Experimental Validation of Network Proximity Predictions

Predictions from computational models require biological validation [17].

In Vitro Target Engagement:

- Objective: Confirm predicted herb components bind to or modulate the activity of key target proteins from the network proximity module.

- Method: Use techniques like surface plasmon resonance (SPR) or cellular thermal shift assay (CETSA) to measure direct binding or stabilization of target proteins in cell lysates treated with herb extracts or isolated compounds.

Functional Phenotypic Assays:

- Objective: Verify the predicted therapeutic effect on disease-relevant phenotypes.

- Method: Apply the herb extract or its active component to disease-relevant cell models (e.g., inflamed endothelial cells, cancer cell lines). Measure downstream effects such as cytokine secretion (ELISA), cell proliferation (MTT assay), or apoptosis (flow cytometry) to confirm modulation of the predicted pathway.

Omics-Level Validation:

- Objective: Provide systems-level evidence that the treatment alters the predicted disease network.

- Method: Perform RNA sequencing (RNA-seq) or proteomics on treated vs. untreated cells. Conduct pathway enrichment analysis (e.g., on KEGG or GO) to test if the differentially expressed genes/proteins are significantly enriched in the network neighborhood initially predicted by the model.
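The enrichment test in the omics-level validation step is commonly framed as a one-sided hypergeometric test: given a background of measured genes, how likely is it that at least the observed number of differentially expressed genes would fall inside the predicted network neighborhood by chance? The sketch below computes that probability. The counts are invented for illustration, and the function name is hypothetical.

```python
from math import comb

def enrichment_p_value(n_background, n_module, n_hits, n_overlap):
    """One-sided hypergeometric p-value, P(overlap >= n_overlap).

    n_background: genes measured in the experiment
    n_module:     background genes inside the predicted neighborhood
    n_hits:       differentially expressed genes
    n_overlap:    differentially expressed genes inside the neighborhood
    """
    upper = min(n_module, n_hits)
    total = comb(n_background, n_hits)
    favorable = sum(
        comb(n_module, k) * comb(n_background - n_module, n_hits - k)
        for k in range(n_overlap, upper + 1)
    )
    return favorable / total

# Invented counts: 20 measured genes, 5 in the predicted module,
# 4 differentially expressed, 3 of which lie inside the module.
p = enrichment_p_value(n_background=20, n_module=5, n_hits=4, n_overlap=3)
print(f"enrichment p-value = {p:.4f}")
```

When many modules or pathways are tested, the resulting p-values should be corrected for multiple testing before they are interpreted.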

Model Visualization and Workflow

Diagram 1: Workflow of the AI-Enhanced HDA Prediction Model (HDAPM-NCP)

Diagram 2: Network Proximity Mechanism of Herb Action

Table 4: Key Research Reagent Solutions for Network Pharmacology Validation

| Reagent / Resource | Category | Primary Function in Validation | Key Features & Notes |
|---|---|---|---|
| HERB Database | Bioinformatics Database | Provides the foundational dataset of known herb-disease associations, ingredients, and targets for model training and benchmarking [18]. | High-throughput experiment-supported data; essential for constructing reliable positive/negative sample sets. |
| HIT 2.0 Database | Bioinformatics Database | Offers curated herb/compound-target interactions from literature mining, crucial for defining the 'Target' layer in the network [17]. | Manually reviewed data; reduces noise compared to purely computationally inferred target lists. |
| Human Protein Interactome (PPI) | Bioinformatics Network | Serves as the scaffold for calculating network proximity metrics between herb targets and disease modules [17]. | Quality and completeness are critical. Use high-confidence, non-redundant interactomes (e.g., from HI-union). |
| Recombinant Human Proteins | Wet-lab Reagent | Used in in vitro binding assays (SPR, ELISA) to validate direct interactions between predicted herb components and target proteins. | Requires purity and correct folding. Often tagged (e.g., His-tag) for purification and detection. |
| Pathway-Specific Reporter Assay Kits | Wet-lab Reagent | Validates functional modulation of predicted signaling pathways (e.g., NF-κB, STAT3) by herb extracts in cell models. | Provides a luminescent or fluorescent readout proportional to pathway activity; high sensitivity. |
| Validated siRNA or CRISPR Libraries | Wet-lab Reagent | Enables gene knockdown/knockout of predicted key target genes to confirm their mechanistic role in the herb's phenotypic effect. | Essential for establishing causality, not just correlation, in the identified network. |
| Multi-Plex Cytokine Assay Kits | Wet-lab Reagent | Measures the secretion profile of numerous cytokines from treated immune cells, validating predicted immunomodulatory effects. | Allows systems-level phenotypic validation aligning with network-level predictions. |

The deconstruction of the 'Herb-Component-Target-Disease-Pathway' network through integrated computational and AI frameworks marks a paradigm shift in natural product research. The model moves the field from descriptive listing of associations to a predictive, mechanistic science grounded in network theory. The kernel-based similarity fusion and network proximity principle provide a robust mathematical and biological basis for understanding and forecasting herbal efficacy [18] [17].

Future development hinges on several frontiers. First, the dynamic integration of temporal and spatial biological data will transform static networks into condition-specific models, capturing how herb effects vary across tissues or disease stages. Second, the application of generative AI and large language models (LLMs) holds promise for standardizing herbal knowledge from ancient texts and generating novel, optimized multi-herb formulations [3] [10]. Third, closing the translational loop is paramount. This requires tighter integration of model predictions with real-world evidence (RWE) from electronic health records and prospective clinical studies, ensuring the network hypotheses ultimately improve patient outcomes [10]. As these tools evolve, they will not only validate traditional knowledge but also systematically unlock the vast, untapped therapeutic potential within the global pharmacopeia of natural products.

The AI-Enhanced Toolkit: Workflows and Applications in Predictive Screening and Mechanism Elucidation

The discovery of therapeutics from natural products (NPs) is undergoing a paradigm shift, moving from a reductionist “one drug, one target” model to a holistic “multi-component, multi-target, multi-pathway” systems approach [19]. This shift is driven by network pharmacology (NP), an interdisciplinary field that integrates systems biology, omics technologies, and computational analysis to map the complex interactions between drugs, targets, and diseases [19]. NP is particularly suited for studying traditional medicine formulations and natural products, which exert therapeutic effects through synergistic actions of numerous compounds [10].

However, the high dimensionality, noise, and heterogeneity of pharmacological data pose significant challenges for conventional NP methods [10]. The integration of Artificial Intelligence (AI), including machine learning (ML), deep learning (DL), and graph neural networks (GNNs), is reshaping the field and has given rise to AI-driven network pharmacology (AI-NP) [10]. AI-NP enhances every stage of the computational workflow, enabling more accurate predictions of bioactive compounds, elucidation of complex mechanisms, and efficient prioritization of candidates for experimental validation [3]. This guide details the core computational workflow of modern NP and AI-NP, framed within the critical context of accelerating and scientifically validating natural product-based drug discovery.

Core Computational Workflow: A Three-Phase Framework

The systematic investigation of natural products via network pharmacology follows a structured pipeline comprising three consecutive phases: Data Collection, Network Construction, and Topological Analysis. This framework transforms raw, heterogeneous data into biologically interpretable insights regarding a natural product’s mechanism of action.

Diagram 1: The AI-Enhanced Network Pharmacology Workflow. This three-phase framework illustrates the integration of AI modules (red ellipses) into the core steps of data processing, network science, and biological interpretation [20] [10].

Phase 1: Data Collection and Curation

The foundation of any robust NP study is comprehensive and high-quality data. This phase involves aggregating heterogeneous data from multiple public databases and literature, followed by rigorous curation.

Key Data Types and Sources:

- Natural Product Compounds: Ingredients are retrieved from specialized databases such as the Traditional Chinese Medicine Systems Pharmacology Database (TCMSP) and the Encyclopedia of Traditional Chinese Medicine (ETCM) [19] [21]. Bioactive compounds are typically filtered by pharmacokinetic properties like Oral Bioavailability (OB ≥ 30%) and Drug-likeness (DL ≥ 0.18) [21].

- Target Identification: Protein targets for the filtered compounds are predicted using the same databases (TCMSP, ETCM) or tools like SwissTargetPrediction.

- Disease-Associated Genes: Targets related to the disease of interest are collected from disease genomics databases like GeneCards, DisGeNET, and OMIM [21].

- Omics Data: Public repositories like the Gene Expression Omnibus (GEO) provide transcriptomic datasets for differential expression analysis in diseased versus healthy states [21].

- Interaction Data: Protein-protein interaction (PPI) networks are constructed using resources like STRING to understand the cellular context of targets [19].
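The pharmacokinetic filter mentioned above (OB ≥ 30%, DL ≥ 0.18) amounts to a simple threshold screen. The sketch below assumes the compound records have already been exported from a database such as TCMSP; the `Compound` class, the field names, and the values are hypothetical placeholders rather than real database entries.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Compound:
    name: str
    ob: float  # oral bioavailability, in percent
    dl: float  # drug-likeness score


def filter_active(compounds, ob_min=30.0, dl_min=0.18):
    """Keep compounds that meet both the OB and DL thresholds."""
    return [c for c in compounds if c.ob >= ob_min and c.dl >= dl_min]


# Illustrative records only; these are not real TCMSP values.
candidates = [
    Compound("cmpd_A", ob=45.2, dl=0.25),
    Compound("cmpd_B", ob=12.0, dl=0.40),  # fails OB
    Compound("cmpd_C", ob=38.0, dl=0.05),  # fails DL
    Compound("cmpd_D", ob=30.0, dl=0.18),  # exactly on both thresholds
]
active = filter_active(candidates)
active_names = [c.name for c in active]
print(active_names)
```

Keeping the thresholds as parameters makes it easy to test how sensitive the downstream target list is to the cut-offs.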

The AI Enhancement: AI addresses critical bottlenecks in this phase. NLP models automate literature mining to extract compound-target relationships. ML models integrate and clean heterogeneous data, impute missing values, and flag inconsistencies. For example, the NeXus platform automates the detection of format inconsistencies and duplicate entries during preprocessing [20].

Phase 2: Network Construction and Modeling

The curated data is integrated into a mathematical graph model, providing a visual and computational representation of the complex system.

Network Types: The core model is typically a multi-layer “herb-compound-target-disease-pathway” network [10]. This includes:

- A compound-target bipartite network.

- A target-disease association network.

- A PPI network among the target proteins.

These layers are integrated to represent the complete therapeutic hypothesis.

Construction Tools: Platforms like NeXus automate this integration, generating unified networks from genes, compounds, and plants. In a validated case, NeXus constructed a network of 143 nodes and 1,033 edges from 111 genes, 32 compounds, and 3 plants in 1.2 seconds [20]. Other tools include Cytoscape (for visualization and analysis) and custom scripts in R or Python [19].
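For readers who prefer custom scripts, the layer integration can be sketched with plain Python dictionaries. This is an illustrative assumption about how such a graph might be assembled, not how NeXus or Cytoscape work internally; the layer names, the `build_network` helper, and the node labels are all hypothetical.

```python
from collections import defaultdict

# Each layer maps to the node types at its two ends (hypothetical naming).
LAYERS = {
    "herb-compound": ("herb", "compound"),
    "compound-target": ("compound", "target"),
    "target-disease": ("target", "disease"),
    "ppi": ("target", "target"),
}


def build_network(edge_lists):
    """Merge per-layer edge lists into one undirected adjacency map.
    Nodes are (type, name) tuples so names cannot collide across layers."""
    adjacency = defaultdict(set)
    for layer, edges in edge_lists.items():
        src_type, dst_type = LAYERS[layer]
        for src, dst in edges:
            u, v = (src_type, src), (dst_type, dst)
            adjacency[u].add(v)
            adjacency[v].add(u)
    return dict(adjacency)


def edge_count(adjacency):
    """Each undirected edge appears in two neighbor sets."""
    return sum(len(nbrs) for nbrs in adjacency.values()) // 2


# Placeholder identifiers, not real herbs, compounds, or genes.
network = build_network({
    "herb-compound": [("H1", "C1"), ("H1", "C2")],
    "compound-target": [("C1", "T1"), ("C1", "T2"), ("C2", "T2")],
    "target-disease": [("T1", "D1"), ("T2", "D1")],
    "ppi": [("T1", "T2")],
})
n_nodes, n_edges = len(network), edge_count(network)
print(n_nodes, n_edges)
```

Typing the nodes keeps a compound and a gene that happen to share a label from being merged into one vertex.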

The AI Enhancement: Graph Neural Networks (GNNs) excel here. They can predict novel, missing interactions within the network (link prediction) and infer latent relationships between compounds and targets not present in existing databases, thereby completing the mechanistic picture [10].
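To make the idea of link prediction concrete, the sketch below scores unobserved compound-target pairs with a simple neighborhood heuristic. It is deliberately not a GNN: it is a similarity-based baseline chosen for brevity, and the function names and compound/target labels are assumptions made for illustration.

```python
def jaccard(a, b):
    """Jaccard similarity of two sets (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def score_missing_links(compound_targets):
    """Rank unobserved (compound, target) pairs.
    A pair scores highly when compounds with a similar known target
    profile are already linked to that target."""
    all_targets = set().union(*compound_targets.values())
    scores = {}
    for cmpd, known in compound_targets.items():
        for target in all_targets - known:
            scores[(cmpd, target)] = sum(
                jaccard(known, other_known)
                for other, other_known in compound_targets.items()
                if other != cmpd and target in other_known
            )
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Placeholder network: C1 and C2 share two targets, so T3 is suggested for C1.
known_links = {
    "C1": {"T1", "T2"},
    "C2": {"T1", "T2", "T3"},
    "C3": {"T4"},
}
ranked = score_missing_links(known_links)
top_pair, top_score = ranked[0]
print(top_pair, round(top_score, 3))
```

A learned model such as a GNN would replace the hand-written similarity with embeddings trained on the whole graph, but the output has the same shape: a ranked list of candidate edges to validate.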

Phase 3: Topological and Functional Analysis

This phase extracts biological meaning from the network structure through mathematical analysis and functional annotation.

Topological Analysis: Key metrics identify important elements:

- Degree Centrality: The number of connections a node has. High-degree nodes (“hubs”) are potential key targets or synergistic compounds.

- Betweenness Centrality: Identifies nodes that act as bridges between network modules, indicating critical communication points.

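- Clustering Coefficient/Modularity: The clustering coefficient measures how densely a node's neighbors are connected to one another, while modularity measures how well the network partitions into functional communities (modules). For instance, a modularity score of 0.428 indicates strong community structure [20].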
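The first two metrics above can be computed directly from an adjacency map. The sketch below is a standard-library illustration using Brandes' algorithm for unweighted, undirected graphs; in practice a library such as NetworkX or Cytoscape's analysis tools would normally be used, and the toy graph is a hypothetical example.

```python
from collections import deque


def degree_centrality(adj):
    """Fraction of the other nodes each node is connected to."""
    n = len(adj)
    return {v: len(nbrs) / (n - 1) for v, nbrs in adj.items()}


def betweenness_centrality(adj):
    """Unnormalized betweenness via Brandes' algorithm (BFS per source)."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        order = []                       # nodes in order of discovery
        preds = {v: [] for v in adj}     # shortest-path predecessors
        sigma = dict.fromkeys(adj, 0)    # number of shortest paths from s
        sigma[s] = 1
        dist = {s: 0}
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(adj, 0.0)  # dependency of s on each node
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Undirected graph: every pair was counted from both endpoints.
    return {v: b / 2 for v, b in bc.items()}


# Two triangles joined by the bridge C-D (placeholder node names).
graph = {
    "A": {"B", "C"}, "B": {"A", "C"}, "C": {"A", "B", "D"},
    "D": {"C", "E", "F"}, "E": {"D", "F"}, "F": {"D", "E"},
}
deg = degree_centrality(graph)
btw = betweenness_centrality(graph)
print(deg["C"], btw["C"], btw["A"])
```

In this toy graph the bridge nodes C and D carry all traffic between the two triangles, which is the pattern betweenness is meant to expose.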

Functional Enrichment Analysis: Target genes within key modules or hubs are analyzed for over-represented biological functions. Standard methods include:

- Over-Representation Analysis (ORA): Uses threshold-based gene lists.

- Gene Set Enrichment Analysis (GSEA): Considers expression rankings without strict thresholds.

- Gene Set Variation Analysis (GSVA): Assesses pathway activity per sample [20].

Tools like clusterProfiler are used to query Gene Ontology (GO) terms and Kyoto Encyclopedia of Genes and Genomes (KEGG) pathways.
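To illustrate how GSEA differs from a threshold-based test, the sketch below computes a simplified, unweighted enrichment score: a running sum over the ranked gene list that steps up at gene-set members and down otherwise. It omits the expression-weighted steps and the permutation-based significance testing of the full method, and the function name and gene labels are assumptions for illustration.

```python
def enrichment_score(ranked_genes, gene_set):
    """Unweighted running-sum enrichment score.
    Returns the running-sum value with the largest absolute deviation
    from zero; positive means the set clusters near the top of the ranking."""
    hits = [gene in gene_set for gene in ranked_genes]
    n_hits = sum(hits)
    n_miss = len(ranked_genes) - n_hits
    if n_hits == 0 or n_miss == 0:
        raise ValueError("gene set must partially overlap the ranked list")
    running, best = 0.0, 0.0
    for is_hit in hits:
        running += 1 / n_hits if is_hit else -1 / n_miss
        if abs(running) > abs(best):
            best = running
    return best


# Placeholder ranking, most up-regulated gene first.
ranking = [f"g{i}" for i in range(1, 11)]
es_top = enrichment_score(ranking, {"g1", "g2", "g4"})
es_bottom = enrichment_score(ranking, {"g8", "g9", "g10"})
print(round(es_top, 3), round(es_bottom, 3))
```

Because the score uses the whole ranking, a gene set can register as enriched even when none of its members would pass a strict differential-expression cut-off.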

The AI Enhancement: AI transforms analysis from descriptive to predictive. Supervised ML models classify compounds as active/inactive or predict their therapeutic pathway. GNNs directly learn from the network structure to predict novel drug-disease associations or repurposing opportunities. Explainable AI (XAI) tools like SHAP help interpret these “black box” models [10].

Table 1: Performance Metrics of an Automated Network Pharmacology Platform (NeXus v1.2) [20]

| Analysis Stage | Dataset Size (Genes) | Processing Time | Memory Usage | Key Output Metric |
|---|---|---|---|---|
| Data Validation & Preprocessing | 111 | 0.5 seconds | Not Specified | 15 format inconsistencies, 3 duplicates resolved |
| Network Construction | 111 | 1.2 seconds | 124 MB | Graph with 143 nodes, 1,033 edges (Density: 0.102) |
| Centrality Calculation | 111 | 0.8 seconds | Additional overhead | Identification of hub nodes (15.3% of compounds with degree ≥5) |
| Full Workflow (Manual Comparison) | 111 | <5 seconds | 480 MB (peak) | >95% time reduction vs. manual (15-25 min) |
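As a consistency check on Table 1, the reported density and time reduction can be reproduced from the other values in the table. The sketch assumes the density refers to an undirected simple graph, which is an interpretation rather than something stated in the source, although the arithmetic agrees with it.

```python
def undirected_density(n_nodes, n_edges):
    """Fraction of all possible node pairs that are connected."""
    return 2 * n_edges / (n_nodes * (n_nodes - 1))


# Values taken from Table 1: 143 nodes, 1,033 edges.
density = undirected_density(143, 1033)

# Upper bound of 5 s for the automated workflow vs. the fastest
# manual estimate of 15 minutes.
time_reduction = 1 - 5 / (15 * 60)

print(round(density, 3), round(time_reduction, 3))
```

Both results agree with the table: the density rounds to 0.102, and even the most conservative comparison gives a reduction well above 95%.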

Case Study: Protocol for Anti-Inflammatory Mechanism Elucidation

The following detailed protocol, based on a study of the Qinghuo Rougan Formula (QHRGF) for uveitis, exemplifies the integration of the core computational workflow with experimental validation [21].

A. Computational Investigation

- Compound Screening & Target Prediction:

- Retrieve compounds for all herbs in QHRGF from TCMSP.

- Filter for active compounds using OB ≥ 30% and DL ≥ 0.18 criteria.

- Obtain predicted protein targets for these compounds from TCMSP and ETCM databases.

- Disease Target Acquisition:

- Search GeneCards with keywords “uveitis” and “immune” to gather known disease-associated targets.

- Network Construction & Analysis:

- Intersect compound targets with disease targets to identify putative therapeutic targets.

- Construct a “QHRGF-Compound-Putative Target-Uveitis” network using Cytoscape.

- Perform topological analysis to identify hub targets.

- Functional & Pathway Enrichment:

- Submit hub targets to enrichment analysis (e.g., DAVID, Metascape) to identify significantly enriched KEGG pathways (e.g., TNF, NF-κB signaling).

- Transcriptomic Integration (WGCNA):

- Download uveitis-related transcriptomic dataset (e.g., GSE7850) from GEO.

- Perform Weighted Gene Co-expression Network Analysis (WGCNA) to find disease-correlated gene modules.

- Overlap module genes with the putative targets from the Network Construction & Analysis step to refine key targets.

- Predictive Modeling & Docking:

- Use a machine learning model (e.g., LASSO regression) on the refined target list to identify a diagnostic biomarker panel.

- Validate binding affinities between key compounds and hub targets via molecular docking (e.g., AutoDock Vina).
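The target-intersection and refinement steps of this protocol reduce to set operations. The sketch below is a minimal illustration under the assumption that the compound targets, disease targets, and WGCNA module genes have already been exported as gene-symbol lists; all helper names and identifiers are hypothetical placeholders, not results from the QHRGF study.

```python
def putative_targets(compound_targets, disease_targets):
    """Targets hit by at least one compound that are also disease-associated."""
    formula_targets = set().union(*compound_targets.values())
    return formula_targets & set(disease_targets)


def refine_with_module(putative, module_genes):
    """Keep only putative targets found in a disease-correlated module."""
    return set(putative) & set(module_genes)


def rank_by_compound_degree(compound_targets, targets):
    """Order targets by how many compounds hit them (ties broken by name)."""
    counts = {
        t: sum(t in hit for hit in compound_targets.values()) for t in targets
    }
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))


# Placeholder inputs for illustration only.
compound_hits = {
    "cmpd_A": {"GENE1", "GENE2", "GENE3"},
    "cmpd_B": {"GENE2", "GENE4"},
    "cmpd_C": {"GENE2", "GENE3", "GENE5"},
}
disease_genes = ["GENE2", "GENE3", "GENE4", "GENE9"]
module_genes = ["GENE2", "GENE3", "GENE7"]

putative = putative_targets(compound_hits, disease_genes)
refined = refine_with_module(putative, module_genes)
hub_ranking = rank_by_compound_degree(compound_hits, refined)
print(sorted(putative), hub_ranking)
```

The ranked list is only a starting point for the topological analysis; in the full protocol, hub status is assessed on the complete network and then checked by docking and experiment.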

B. Experimental Validation Protocol

- Preparation of QHRGF Decoction: Weigh and mix herbal components. Perform decoction twice with water (10x and 8x volume), combine filtrates, concentrate, and granulate [21].

- Quality Control via HPLC: Use High-Performance Liquid Chromatography (HPLC) to quantify marker compounds (e.g., baicalin, gentiopicroside). Conditions: C18 column, mobile phase of acetonitrile and 0.1% formic acid, detection at 254 nm [21].

- In Vivo Validation: Induce uveitis in an animal model (e.g., rat). Administer QHRGF to the treatment group and compare it against a model control group and a normal control group. Measure clinical inflammatory scores and, upon sacrifice, analyze ocular tissues for:

- Expression levels of hub targets (via qPCR or Western Blot).

- Levels of key inflammatory cytokines (e.g., TNF-α, IL-6 via ELISA).

- Histopathological examination (H&E staining).

Diagram 2: Integrating Computational Prediction with Experimental Validation. The workflow shows how hub targets and pathways identified via network pharmacology (top) directly guide the design and analysis of in vivo experiments (bottom) to confirm the therapeutic mechanism [21].

Table 2: Research Reagent Solutions for Network Pharmacology Studies

| Category | Item / Resource | Function / Purpose | Example / Specification |

|---|---|---|---|

| Chemical & Herbal Reference Standards | Marker Compounds (e.g., Baicalin, Gentiopicroside) | HPLC quantification for decoction quality control and experimental dosing [21]. | Purity ≥98% (HPLC grade). Used to establish standard curves. |

| Bioinformatics Databases | TCMSP, ETCM | Source for natural product compounds, ADMET properties, and predicted targets [19] [21]. | TCMSP filters: OB≥30%, DL≥0.18. |